How to Implement AIOps: A 5-Step Roadmap for IT Teams

Amartya Gupta

What is AIOps implementation? AIOps implementation is the process of deploying AI and machine learning capabilities across IT operations — ingesting telemetry data, training models on your environment's behavior, and automating alert correlation, root cause analysis, and incident response.

Most AIOps implementations fail. Not because the technology doesn't work — but because teams try to boil the ocean on day one. They buy a platform, point it at everything, and expect magic. Six months later, the ML models are undertrained, the team doesn't trust the outputs, and the license renewal conversation gets awkward.

The teams that succeed take a different approach. They start with one pain point. They prove value fast. Then they expand. This guide covers the practical steps to get there — based on what actually works in production, not what looks good in a vendor demo.

Why Most AIOps Implementations Fail — And How to Avoid It

Before diving into the roadmap, it's worth understanding the three most common failure patterns. Knowing what kills AIOps projects helps you design one that survives.

Failure 1: Boiling the Ocean

Teams try to cover every domain — network, application, infrastructure, security — from day one. The data volume overwhelms the platform before the ML models have time to learn. Result: a lot of noisy, untuned outputs that nobody trusts.

Fix: Start with one domain. If alert fatigue is your biggest pain, start with infrastructure alerts. Let the models learn. Prove value. Then add application and network data.

Failure 2: No Clear Success Metrics

"Improve IT operations" isn't a measurable goal. Without defined KPIs, teams can't tell whether the platform is working — and leadership can't justify the investment.

Fix: Define 3-4 metrics before you deploy. Noise reduction ratio. MTTR for P1 incidents. Automation rate for tier-1 issues. Time to root cause.

Failure 3: Technology Without Adoption

The platform works. The models are trained. But the operations team still uses their old workflow because nobody trained them, the alerts go to the wrong channel, or the interface isn't integrated into their daily tools.

Fix: Invest in change management. Integrate AIOps outputs into existing ITSM workflows, Slack channels, and on-call tools. Make it easier to use the platform than to work around it.

AIOps Readiness Checklist: Before You Start

Not every organization is ready for AIOps on day one. Here's what needs to be in place:

| Readiness Factor | Minimum Requirement |
|---|---|
| Data sources | At least 3 telemetry sources (metrics, logs, events) accessible via API or agent |
| Data quality | Clean enough for ML: consistent timestamps, labeled sources, reasonable retention |
| Tool inventory | Documented list of current monitoring, logging, and ITSM tools |
| Team buy-in | Operations and engineering teams willing to pilot new workflows |
| Executive sponsor | Someone who can protect the project budget through the 3-6 month learning curve |
| Success criteria | Defined KPIs that everyone agrees on before deployment begins |

If you're missing two or more of these, spend a month getting them in place before you start evaluating platforms.

Step 1: Align to a Specific Business Problem

AIOps is a multi-domain technology. It can address alert fatigue, incident response speed, capacity planning, change impact analysis, and more. Trying to solve all of them at once is the fastest path to an underperforming deployment.

Pick one problem. The best starting points are:

Alert fatigue: Your NOC handles 5,000+ alerts per day and engineers are burning out.

Slow MTTR: P1 incidents take 4+ hours to resolve because root cause analysis is manual.

Manual correlation: Your team spends 30+ minutes per incident toggling between dashboards to piece together what happened.

Tool sprawl: You run 10+ monitoring tools and none of them give you a unified view.

Define what success looks like for that specific problem. "Reduce P1 MTTR from 4 hours to under 1 hour within 90 days" is a goal you can measure. "Improve operations" is not.

Step 2: Integrate Data Sources Broadly

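AIOps runs on data. The more sources you connect, the better the ML models perform, because cross-domain correlation is where the real value lives. This does not conflict with starting small: connect sources in stages, beginning with the telemetry closest to the problem you chose in Step 1.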

What to Connect First

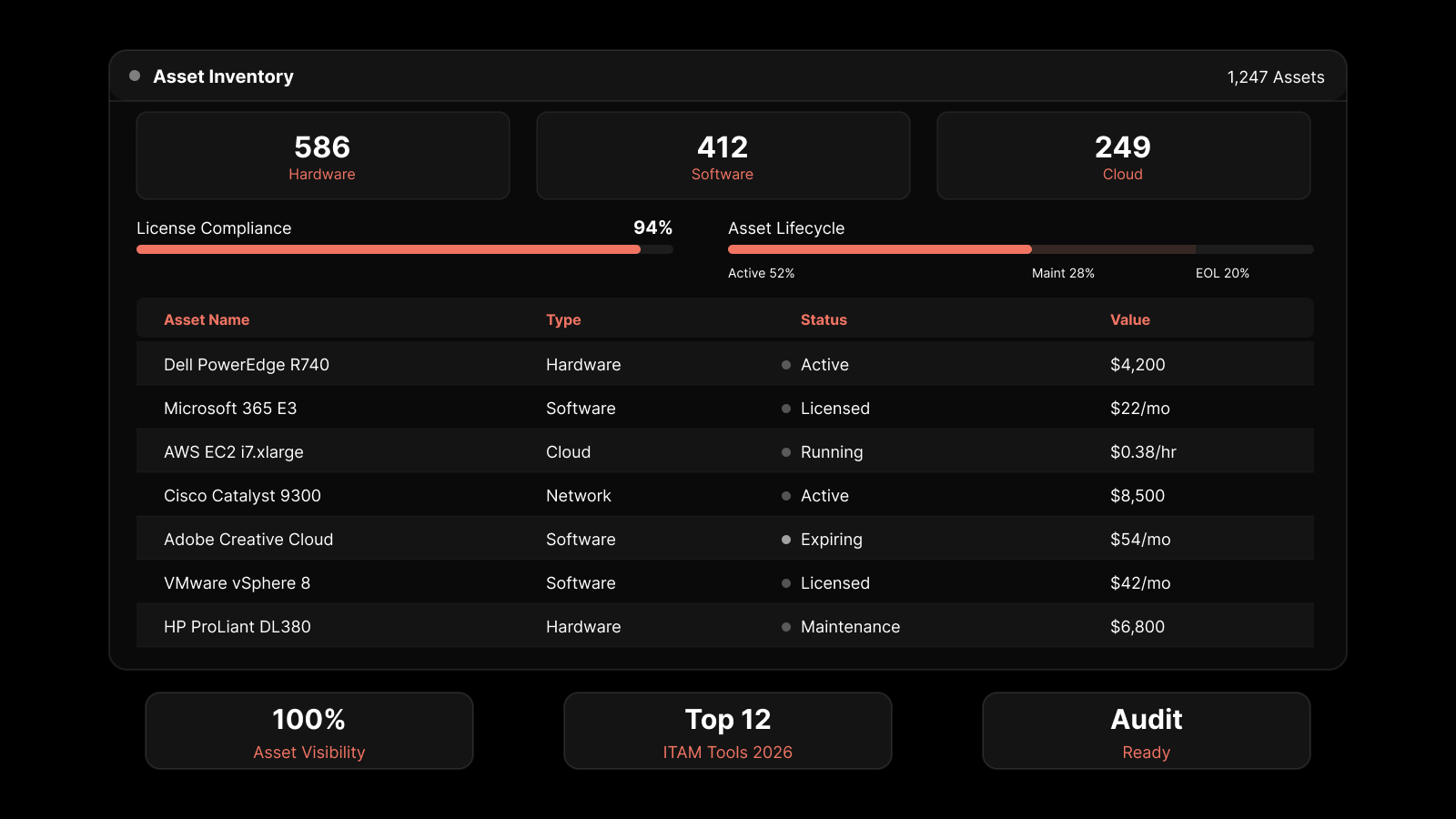

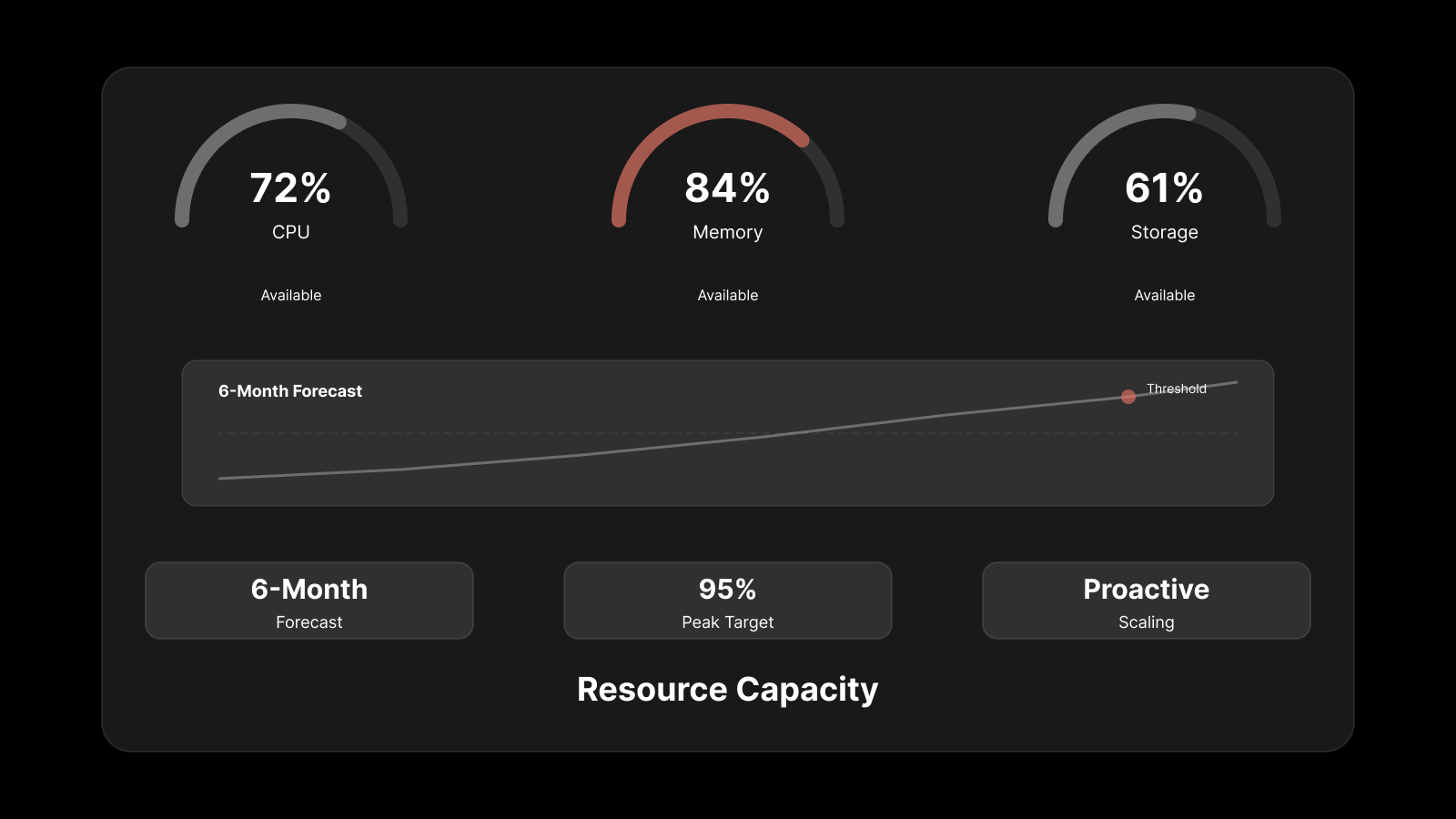

Infrastructure metrics: CPU, memory, disk, network utilization from servers, VMs, and containers

Logs: System logs, application logs, security logs — the raw signal your infrastructure produces

Events and alerts: From every monitoring tool in your stack — Motadata AIOps ingests data from any source via standard protocols

Flow and network data: NetFlow, sFlow, SNMP data for network visibility

Change records: Deployment logs and ITSM change tickets — essential for correlating incidents with recent changes

Data Integration Best Practices

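Normalize timestamps: ML models need consistent time data. Keep every host's clock synchronized via NTP, and emit timestamps in UTC (or with an explicit UTC offset) so events from different sources line up.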

Label sources clearly: Every data stream should identify its origin (host, service, environment, region).

Set reasonable retention: 30-90 days of historical data gives ML models enough to establish baselines.

Start with APIs, not agents: Where possible, use API-based integrations to minimize infrastructure changes during pilot.
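The first two practices above can be enforced at ingestion time. The following is a minimal sketch, not any platform's actual pipeline: the record layout, the `REQUIRED_LABELS` tuple, and the function names are assumptions made for illustration. It rejects records that lack source labels or a UTC offset and rewrites every timestamp in UTC.

```python
from datetime import datetime, timezone

# Assumed label set, taken from the "label sources clearly" practice above.
REQUIRED_LABELS = ("host", "service", "environment", "region")


def to_utc_iso(raw: str) -> str:
    """Parse an ISO 8601 timestamp that carries a UTC offset and re-express it in UTC."""
    parsed = datetime.fromisoformat(raw)
    if parsed.tzinfo is None:
        # A naive timestamp cannot be aligned with other sources safely.
        raise ValueError(f"timestamp has no UTC offset: {raw!r}")
    return parsed.astimezone(timezone.utc).isoformat()


def normalize_record(record: dict) -> dict:
    """Return a copy of the record with a UTC timestamp, or raise if labels are missing."""
    missing = [label for label in REQUIRED_LABELS if not record.get(label)]
    if missing:
        raise ValueError(f"record is missing source labels: {missing}")
    return {**record, "timestamp": to_utc_iso(record["timestamp"])}


# Hypothetical log record emitted by a host running in UTC+05:30.
example = normalize_record({
    "timestamp": "2024-05-01T14:30:00+05:30",
    "host": "web-03",
    "service": "nginx",
    "environment": "prod",
    "region": "ap-south-1",
    "message": "upstream timed out",
})
# example["timestamp"] is now "2024-05-01T09:00:00+00:00"
```

Rejecting naive timestamps outright is a deliberate choice in this sketch: guessing a time zone tends to produce silent misalignment that is much harder to debug than a failed ingest.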

Step 3: Enable AI-Driven Analysis

Once data is flowing, enable the ML capabilities:

Anomaly Detection

Let the platform learn baselines for 2-4 weeks before acting on anomaly alerts. This training period is essential — models that haven't seen normal behavior will flag everything as abnormal.

Anomaly detection works best when it has enough context. A CPU spike at 2 PM on batch-processing day isn't an anomaly. The same spike at 3 AM on a Tuesday is.
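To make the role of context concrete, here is a small sketch that keeps a separate baseline for each (weekday, hour) slot and flags values that sit far from that slot's norm. It uses a plain z-score as a stand-in; commercial platforms use their own models, and the function names, sample values, and threshold of 3.0 are assumptions for illustration.

```python
from collections import defaultdict
from datetime import datetime
from statistics import mean, stdev


def build_baselines(samples):
    """samples: iterable of (datetime, value). Returns {(weekday, hour): (mean, stdev)}."""
    buckets = defaultdict(list)
    for ts, value in samples:
        buckets[(ts.weekday(), ts.hour)].append(value)
    # stdev needs at least two observations, so thinner slots are skipped.
    return {slot: (mean(vals), stdev(vals)) for slot, vals in buckets.items() if len(vals) >= 2}


def is_anomalous(baselines, ts, value, threshold=3.0):
    """Flag a value that is more than `threshold` standard deviations from its slot's mean."""
    slot = (ts.weekday(), ts.hour)
    if slot not in baselines:
        return False  # no baseline for this slot yet, so stay quiet
    mu, sigma = baselines[slot]
    if sigma == 0:
        return value != mu
    return abs(value - mu) / sigma > threshold


# Four weeks of hypothetical CPU history: Monday 2 PM is batch time, Tuesday 3 AM is idle.
history = [
    (datetime(2024, 1, 1, 14), 88), (datetime(2024, 1, 8, 14), 92),
    (datetime(2024, 1, 15, 14), 90), (datetime(2024, 1, 22, 14), 91),
    (datetime(2024, 1, 2, 3), 18), (datetime(2024, 1, 9, 3), 22),
    (datetime(2024, 1, 16, 3), 20), (datetime(2024, 1, 23, 3), 21),
]
baselines = build_baselines(history)

batch_spike = is_anomalous(baselines, datetime(2024, 1, 29, 14), 91)  # False: normal for batch time
night_spike = is_anomalous(baselines, datetime(2024, 1, 30, 3), 90)   # True: far above the 3 AM norm
```

The empty-baseline branch mirrors the training-period advice above: until a slot has history, the sketch stays silent rather than flagging everything.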

Event Correlation

Configure event correlation rules to group related alerts. A single storage failure shouldn't generate 200 independent tickets; the AIOps platform should recognize the resulting alerts as one correlated incident.
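A simple way to picture a correlation rule is "same upstream resource, close together in time." The sketch below implements only that rule. The `Alert` fields (in particular `upstream_resource`), the 300-second window, and the host names are assumptions for illustration; real platforms combine topology, text similarity, and learned patterns.

```python
from dataclasses import dataclass, field


@dataclass
class Alert:
    timestamp: float          # seconds since epoch
    source: str               # host or tool that raised the alert
    upstream_resource: str    # hypothetical field: shared resource the source depends on
    message: str


@dataclass
class Incident:
    resource: str
    alerts: list = field(default_factory=list)


def correlate(alerts, window_seconds=300):
    """Group alerts that share an upstream resource and arrive within
    window_seconds of the previous alert in the same group."""
    open_incidents = {}  # resource -> most recent Incident for that resource
    incidents = []
    for alert in sorted(alerts, key=lambda a: a.timestamp):
        current = open_incidents.get(alert.upstream_resource)
        if current is None or alert.timestamp - current.alerts[-1].timestamp > window_seconds:
            # Either the first alert for this resource or the gap is too long: new incident.
            current = Incident(resource=alert.upstream_resource)
            incidents.append(current)
            open_incidents[alert.upstream_resource] = current
        current.alerts.append(alert)
    return incidents


# 200 alerts caused by one storage array, plus one unrelated alert.
storm = [Alert(1000 + i, f"vm-{i:03d}", "san-01", "I/O timeout") for i in range(200)]
storm.append(Alert(1050, "web-03", "web-03", "certificate expires soon"))
incidents = correlate(storm)  # two incidents instead of 201 tickets
```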

Root Cause Analysis

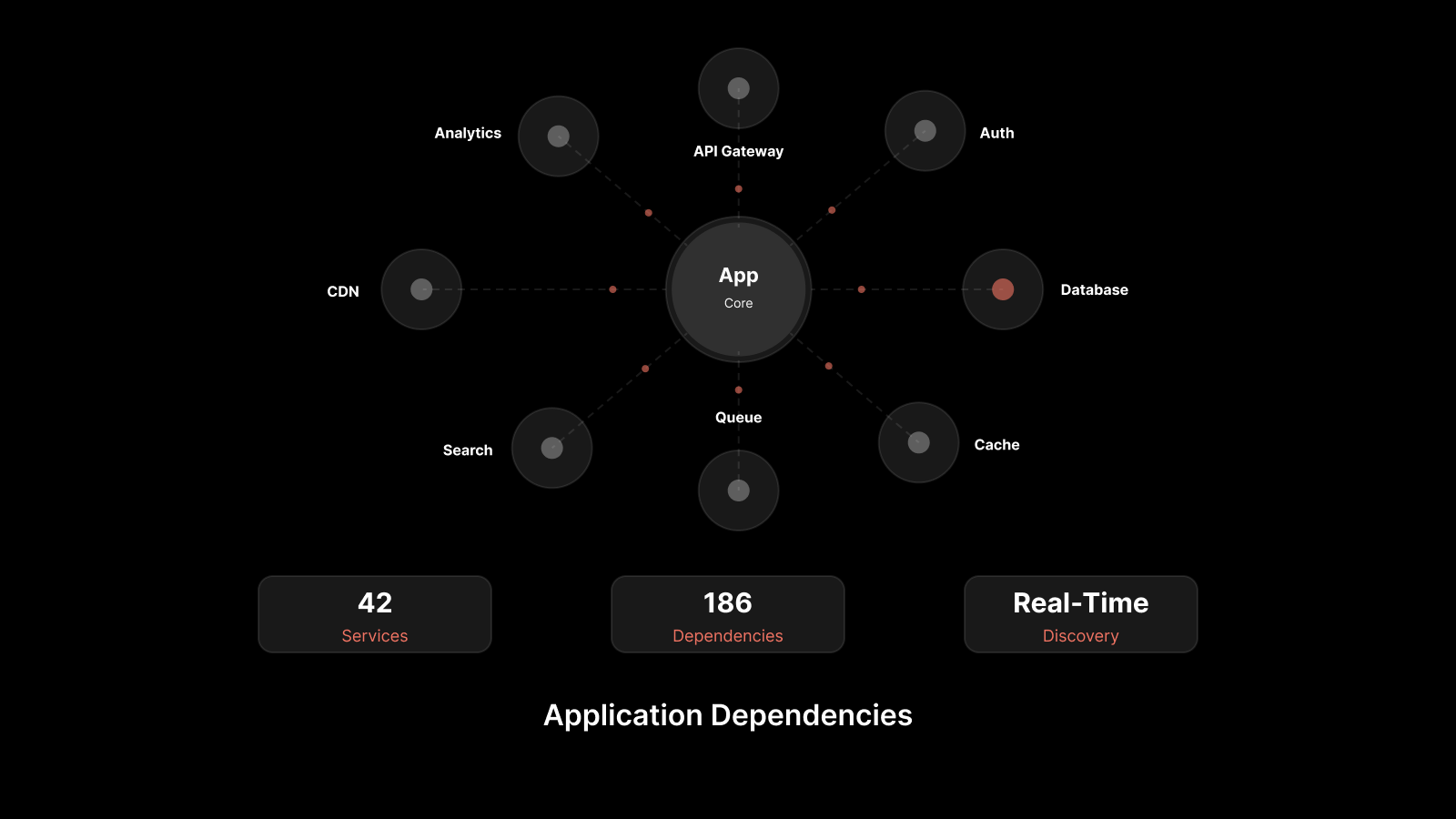

Enable topology-aware RCA so the platform understands service dependencies. When a database goes down and three applications fail, the platform should identify the database as the root cause — not raise four separate incidents.
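The database example can be expressed as a small graph problem: among the failing services, the likely root causes are the ones whose own dependencies are healthy. This is a deliberately simplified sketch; the dependency map and service names are hypothetical, and it only inspects direct dependencies.

```python
def find_root_causes(depends_on, failing):
    """Return failing services none of whose direct dependencies are failing.

    depends_on maps each service to the services it relies on.
    """
    failing = set(failing)
    return sorted(
        service
        for service in failing
        if not any(dep in failing for dep in depends_on.get(service, ()))
    )


# Hypothetical topology: three applications share one database.
depends_on = {
    "checkout": ["orders-db"],
    "billing": ["orders-db"],
    "reports": ["orders-db"],
    "orders-db": [],
}
failing = ["checkout", "billing", "reports", "orders-db"]

root_causes = find_root_causes(depends_on, failing)  # ["orders-db"]
```

With an accurate dependency map, the four failures collapse into one incident with `orders-db` as the suspected cause. A production implementation would also need to handle circular dependencies and failures that skip over a healthy intermediate service, which this sketch does not.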

Step 4: Build a Unified Data Foundation

This is the "data lake" step — but the goal isn't just storing data. It's making data queryable across domains so AI models can find patterns humans can't.

What This Looks Like in Practice

Single query interface: Engineers can search metrics, logs, and events from one place

Cross-domain dashboards: One view showing infrastructure health, application performance, and network status

Historical analysis: Ability to replay incidents with full telemetry context from all sources

The unified data foundation is what separates "AIOps" from "monitoring with ML sprinkled on top." Without it, your models only see a slice of the picture.
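As an illustration of what a single query interface buys you, the sketch below merges records from several telemetry types into one time-ordered view for a host. It assumes every source has already been normalized to a shared shape (`timestamp`, `host`, `detail`); that shape, the in-memory lists, and the host names are stand-ins for whatever storage the platform actually uses.

```python
from datetime import datetime, timedelta, timezone


def incident_timeline(sources, host, start, end):
    """Merge records from several telemetry sources into one time-ordered view."""
    merged = []
    for kind, records in sources.items():
        for record in records:
            if record["host"] == host and start <= record["timestamp"] <= end:
                # Tag each record with the telemetry type it came from.
                merged.append({**record, "kind": kind})
    return sorted(merged, key=lambda r: r["timestamp"])


t0 = datetime(2024, 5, 1, 9, 0, tzinfo=timezone.utc)
sources = {  # hypothetical, already-normalized telemetry
    "metric": [{"timestamp": t0 + timedelta(minutes=2), "host": "db-01", "detail": "disk latency 450 ms"}],
    "log": [
        {"timestamp": t0 + timedelta(minutes=3), "host": "db-01", "detail": "write timeout"},
        {"timestamp": t0 + timedelta(minutes=3), "host": "web-03", "detail": "HTTP 502"},
    ],
    "change": [{"timestamp": t0, "host": "db-01", "detail": "storage firmware update deployed"}],
}

timeline = incident_timeline(sources, "db-01", t0, t0 + timedelta(minutes=10))
kinds = [entry["kind"] for entry in timeline]  # ["change", "metric", "log"]
```

Seen together, the change record sits directly ahead of the latency spike and the timeout, which is the kind of pattern that stays hidden when each telemetry type lives in its own tool.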

Step 5: Automate Remediation for Known Issues

This is where AIOps pays for itself. Once you've identified recurring issues through ML analysis, build automated responses:

Tier-1 automation: Service restarts, log rotation, disk cleanup, process kills for known failure patterns

Escalation automation: Auto-route correlated incidents to the right team with full context — no more manual triage

Runbook execution: Trigger pre-built remediation scripts when the platform detects specific anomaly patterns

Start conservative. Automate the actions your team does 10+ times per week with zero variation. As confidence grows, expand to more complex scenarios.

Automation Maturity Levels

| Level | Description | Example |
|---|---|---|
| 1 (Notify) | Alert fires, human investigates | "CPU anomaly detected on web-03" |
| 2 (Enrich) | Alert fires with full context attached | Same alert + recent changes, dependency map, related logs |
| 3 (Suggest) | Platform recommends action | "Similar incidents resolved by restarting nginx. Execute?" |
| 4 (Execute) | Platform takes action autonomously | Auto-restart nginx, verify health, close ticket |

Most teams should aim for Level 3 (Suggest) within 6 months. Level 4 (Execute) should be reserved for well-understood, low-risk actions.
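One way to keep autonomous execution restricted to low-risk actions is an explicit allowlist. The sketch below is an assumption-laden illustration rather than a real platform API: the `Maturity` enum, the allowlist contents, and the step strings are all hypothetical. It returns the steps a responder pipeline would take at each maturity level and falls back to a suggestion when an action is not pre-approved.

```python
from enum import IntEnum


class Maturity(IntEnum):
    NOTIFY = 1
    ENRICH = 2
    SUGGEST = 3
    EXECUTE = 4


# Hypothetical allowlist of well-understood, low-risk actions.
AUTO_EXECUTE_ALLOWLIST = {"restart_nginx", "rotate_logs"}


def plan_response(level, action, context):
    """Return the ordered steps for a detected issue at the given maturity level."""
    steps = ["notify on-call"]
    if level >= Maturity.ENRICH:
        steps.append("attach context: " + ", ".join(sorted(context)))
    if level >= Maturity.EXECUTE and action in AUTO_EXECUTE_ALLOWLIST:
        steps += [f"run {action}", "verify health", "close ticket"]
    elif level >= Maturity.SUGGEST:
        # Either the team is at Suggest, or the action is not pre-approved.
        steps.append(f"suggest {action} and wait for approval")
    return steps


context = ["recent changes", "dependency map", "related logs"]
approved = plan_response(Maturity.EXECUTE, "restart_nginx", context)
unapproved = plan_response(Maturity.EXECUTE, "failover_database", context)
# approved ends with "close ticket"; unapproved ends with a suggestion awaiting approval
```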

How to Measure AIOps Success

Track these KPIs monthly and trend them over time:

| KPI | Formula | Target |
|---|---|---|
| Noise reduction ratio | 1 - (actionable incidents / raw alerts) | 90%+ |
| MTTR | Avg time from alert to resolution | 50% reduction within 6 months |
| Automation rate | Auto-resolved incidents / total incidents | 30%+ within 12 months |
| Time to root cause | Avg time from incident start to RCA | Under 15 minutes for known patterns |
| Cost per incident | Total ops cost / incidents handled | 40% reduction within 12 months |

If you're not improving on these metrics quarter over quarter, something in your implementation needs adjustment — usually data coverage or team adoption.
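The formulas in the KPI table are simple enough to compute from a monthly export of alert and incident counts. The following sketch uses the table's definitions directly; the function names and the sample numbers are made up for illustration and are not benchmarks.

```python
def noise_reduction_ratio(actionable_incidents, raw_alerts):
    """1 - (actionable incidents / raw alerts)."""
    if raw_alerts <= 0:
        raise ValueError("raw_alerts must be positive")
    return 1 - actionable_incidents / raw_alerts


def automation_rate(auto_resolved, total_incidents):
    """Auto-resolved incidents / total incidents."""
    if total_incidents <= 0:
        raise ValueError("total_incidents must be positive")
    return auto_resolved / total_incidents


def mean_minutes(durations):
    """Average duration in minutes; usable for MTTR and for time to root cause."""
    if not durations:
        raise ValueError("durations must not be empty")
    return sum(durations) / len(durations)


def cost_per_incident(total_ops_cost, incidents_handled):
    """Total ops cost / incidents handled."""
    if incidents_handled <= 0:
        raise ValueError("incidents_handled must be positive")
    return total_ops_cost / incidents_handled


# Hypothetical month: 5,000 raw alerts collapsed into 400 actionable incidents.
noise = round(noise_reduction_ratio(400, 5000), 2)   # 0.92, above the 90% target
automation = automation_rate(45, 150)                # 0.3, at the 30% target
mttr = mean_minutes([240, 200, 280])                 # 240.0 minutes
mttr_change = (mttr - 480) / 480                     # -0.5 against a hypothetical 480-minute baseline
```

Computing the same functions over the same export every month keeps the trend comparable, which matters more than the absolute numbers.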

AIOps Implementation Timeline: What to Expect

| Phase | Duration | Milestone |
|---|---|---|
| Planning | 2-4 weeks | Problem defined, KPIs set, platform selected |
| Data integration | 2-4 weeks | 3+ data sources connected, data flowing |
| ML training | 2-6 weeks | Baselines established, initial anomaly detection tuned |
| Pilot operations | 4-8 weeks | Team using platform for one domain, initial MTTR improvements |
| Expansion | Ongoing | Additional domains, automation rules, cross-team adoption |

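Total time to first measurable value: 8-16 weeks, assuming adjacent phases overlap (run strictly back to back, the four phases before expansion add up to 10-22 weeks). Full maturity with automation: 6-12 months.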

What IT Leaders Should Also Know About AIOps Adoption

How much data does AIOps need to be effective?

At minimum, 2-4 weeks of historical data across 3+ sources. More data and more sources improve correlation accuracy. The platform performs best when it can see metrics, logs, and events together — not just one telemetry type.

Can we implement AIOps without replacing our existing tools?

Yes. Most AIOps platforms work as an overlay, ingesting data from existing monitoring, logging, and APM tools via APIs. You keep your current stack and add the AI/ML correlation layer on top.

What skills does our team need for AIOps?

Your existing operations team can run AIOps. The platform handles the ML complexity. What you do need is someone who can map data sources, define correlation rules, and translate business requirements into measurable KPIs.

What's the biggest risk in AIOps implementation?

Under-investing in adoption. The platform will produce insights, but if your team doesn't change their workflow to use them, you've paid for an expensive dashboard nobody checks.

How Motadata AIOps Accelerates Time to Value

Motadata AIOps is built for teams that need fast time-to-value without a six-month consulting engagement. The platform ingests metrics, logs, flows, and APM data from any source via pre-built integrations, with ML models that establish baselines within weeks — not months.

What makes Motadata practical for phased implementations: out-of-the-box anomaly detection, automated event correlation, and dynamic topology mapping that understands your service dependencies from day one. Teams typically achieve 90%+ noise reduction within the first month of production use.

If you're planning an AIOps rollout and want to see how fast the platform can learn your environment, request a demo.

FAQs

How long does AIOps implementation take?

Expect 8-16 weeks from planning to first measurable value. Data integration takes 2-4 weeks, ML model training needs 2-6 weeks for baseline establishment, and pilot operations run 4-8 weeks. Full maturity with cross-domain automation typically takes 6-12 months.

What's the most common AIOps implementation mistake?

Trying to cover too many domains at once. The most successful deployments start with one specific pain point — usually alert fatigue or slow MTTR — prove value there, then expand. Starting broad means the ML models take longer to train and the team never builds confidence in any single capability.

How do I know if my organization is ready for AIOps?

You need at least three accessible data sources (metrics, logs, events), a team willing to pilot new workflows, an executive sponsor, and clear success criteria. If you manage more than 200 devices and your team deals with alert fatigue, you're a strong candidate.

Can AIOps work with our existing monitoring and ITSM tools?

Yes. Platforms like Motadata AIOps integrate with existing tools via APIs and standard protocols (SNMP, syslog, REST APIs). You don't need to rip and replace — AIOps sits on top of your current stack and adds the AI/ML layer.

What metrics should I track to measure AIOps ROI?

The four most important metrics are noise reduction ratio (target 90%+), mean time to resolution improvement (target 50% reduction), automation rate for tier-1 issues (target 30%+), and time to root cause (target under 15 minutes for known patterns).