How Observability Improves Data Quality for AI/ML Model Training

What is data observability for AI/ML? Data observability applies real-time monitoring, anomaly detection, and lineage tracking to data pipelines, helping keep training data accurate, complete, and representative throughout the ML lifecycle. It catches quality issues before they corrupt your models.

Every ML model is only as good as the data it trains on. A fraud detection model that misclassifies transactions. A recommendation engine that suggests irrelevant products. A predictive maintenance system that misses equipment failures. Failures like these often trace back to the same root cause: bad training data.

The problem isn't that teams don't care about data quality. It's that modern ML pipelines are too complex to monitor manually. Data flows through dozens of transformations, joins, and enrichment steps before it reaches a training job. A schema change in an upstream source, a failed ETL job, a gradual shift in data distributions — any of these can silently corrupt your training data. And you won't know until the model starts producing garbage predictions in production.

That's where observability changes the equation. According to IDC, 30-40% of AI/ML projects fail due to poor data quality. Observability gives ML teams the same deep visibility into their data pipelines that infrastructure teams have had for years — and it's the difference between catching problems before training starts and debugging them after deployment.

Why Data Quality Makes or Breaks ML Models

Machine learning algorithms are pattern recognition engines. They learn from the data you feed them. When that data contains errors, gaps, biases, or inconsistencies, the model learns the wrong patterns — and it does so with confidence.

Here's what poor data quality actually costs:

Garbage In, Confident Garbage Out

A model trained on incomplete customer data won't just underperform. It'll make predictions with high confidence that are systematically wrong. A classification model trained on mislabeled data doesn't hedge — it categorizes with conviction, just incorrectly.

Bias Amplification

Training data that underrepresents certain populations doesn't produce a slightly biased model. It produces a model that systematically discriminates. An HR screening model trained on historical hiring data that skewed male will rank male candidates higher — not because it's "biased" in a human sense, but because that's what the data taught it.

Compounding Pipeline Errors

In complex ML pipelines, one data quality issue cascades. A missing field in the ingestion layer becomes a null value in feature engineering, which becomes a skewed distribution in the training set, which becomes a degraded model in production. Each stage amplifies the original error.

The Cost of Late Discovery

Finding a data quality issue during model development costs hours of debugging. Finding it after deployment costs revenue, reputation, and trust. According to Gartner, organizations lose an average of $12.9 million annually due to poor data quality — and that figure rises sharply when ML models make automated decisions based on that data.

What Data Observability Actually Means for ML Teams

Data observability isn't just another monitoring dashboard. It's a systematic approach to understanding the health of your data across every stage of the ML pipeline.

Traditional data quality tools rely on predefined rules — check for nulls, validate value ranges, enforce schema constraints. They catch the issues you've already thought of. Data observability goes further by applying ML-based anomaly detection to your data itself, catching the issues you haven't anticipated.

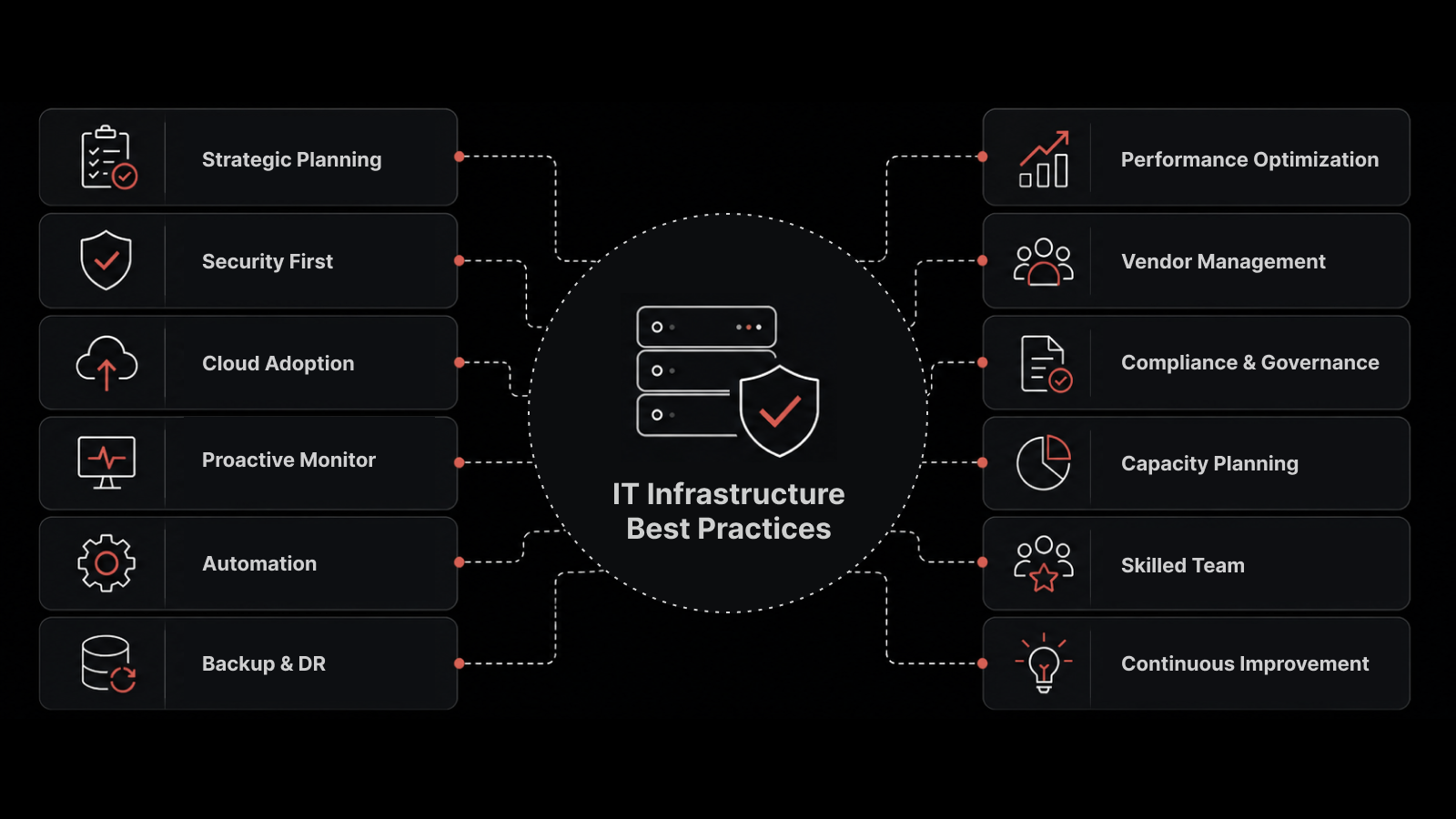

The Five Pillars of Data Observability

Freshness: Is data arriving on time? A training pipeline that expects hourly data updates but receives stale data will train on outdated patterns. Observability monitors ingestion schedules and alerts when data stops flowing or arrives late.

Volume: Is the expected amount of data present? A sudden drop in row counts might indicate a failed upstream job. A sudden spike might indicate duplicate ingestion. Both corrupt training data.

Schema: Has the data structure changed? An upstream team renames a column, changes a data type, or adds a field. Without schema monitoring, your pipeline either breaks loudly (best case) or silently produces wrong results (worst case).

Distribution: Do the statistical properties of your data match expectations? This is where you catch data drift — the gradual or sudden shift in data distributions that degrades model performance over time.

Lineage: Where did this data come from, and what happened to it? Lineage tracking lets you trace any data quality issue back to its source, understand which transformations touched it, and determine which downstream models are affected.
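The lineage pillar can be pictured as a graph walk. The sketch below is illustrative only: the `LINEAGE` mapping and every asset name in it are hypothetical, and a real platform would build this graph from pipeline metadata rather than a hand-written dictionary.

```python
from collections import deque

# Hypothetical lineage graph: each key feeds the assets listed as its value.
LINEAGE = {
    "raw_transactions": ["cleaned_transactions"],
    "cleaned_transactions": ["fraud_features", "spend_features"],
    "fraud_features": ["fraud_model_v3"],
    "spend_features": ["recommendation_model"],
    "partner_feed": ["spend_features"],
}

def downstream_of(node, lineage):
    """Return every asset reachable from `node`, in breadth-first order."""
    seen, queue, order = {node}, deque([node]), []
    while queue:
        current = queue.popleft()
        for child in lineage.get(current, []):
            if child not in seen:
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order

# Which feature tables and models are exposed if cleaned_transactions is bad?
affected = downstream_of("cleaned_transactions", LINEAGE)
print(affected)
```

Reversing the edges of the same graph would answer the opposite question: which upstream sources could have introduced an issue seen in a given feature table.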

How Observability Detects Data Quality Issues in ML Pipelines

Observability doesn't just tell you that data quality has degraded. It tells you where, when, and why — often before the degradation reaches your training pipeline.

Real-Time Anomaly Detection

Observability platforms continuously analyze incoming data against learned baselines. When a numerical field that typically ranges from 0-100 suddenly includes values above 10,000, the system flags it immediately. When a categorical field that normally has 15 distinct values suddenly has 50, that's a signal.

This isn't rule-based checking. It's ML-driven pattern recognition applied to your data, adapting as your data naturally evolves while still catching genuine anomalies.
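One simple way to picture a learned, adapting baseline is an exponentially weighted mean and variance per metric. This is a minimal sketch of that idea, not how any particular platform works; the class name, the `alpha`, `threshold`, and `warmup` parameters, and the sample readings are all assumptions chosen for illustration.

```python
import math

class EwmaBaseline:
    """Tracks an exponentially weighted mean and variance for one metric."""

    def __init__(self, alpha=0.1, threshold=4.0, warmup=10):
        self.alpha = alpha          # how quickly the baseline adapts
        self.threshold = threshold  # deviation (in std units) treated as anomalous
        self.warmup = warmup        # observations to absorb before flagging anything
        self.mean = None
        self.var = 0.0
        self.count = 0

    def observe(self, value):
        """Return True if `value` is anomalous; otherwise fold it into the baseline."""
        if self.mean is None:
            self.mean = float(value)
            self.count = 1
            return False
        std = math.sqrt(self.var)
        deviation = abs(value - self.mean)
        if self.count >= self.warmup and std > 0 and deviation > self.threshold * std:
            return True  # anomalies are not absorbed into the baseline
        diff = value - self.mean
        self.mean += self.alpha * diff
        self.var = (1 - self.alpha) * (self.var + self.alpha * diff * diff)
        self.count += 1
        return False

# A field that normally sits between 0 and 100, with one wild value.
detector = EwmaBaseline()
readings = [42, 55, 61, 38, 47, 70, 52, 49, 66, 58, 45, 53, 10_500, 51]
flags = [detector.observe(v) for v in readings]
print(flags)
```

The same detector could be fed a distinct-value count per batch to catch the cardinality jump described above. Because normal values keep updating the mean and variance, slow natural change moves the baseline while a sudden outlier still stands out.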

Pipeline Bottleneck Identification

Data pipelines aren't just about correctness — they're about timing. A feature engineering job that typically runs in 20 minutes but suddenly takes 3 hours affects your training schedule. Observability monitors pipeline performance metrics — execution time, resource consumption, throughput — and identifies bottlenecks before they cause downstream failures.

Cross-Pipeline Correlation

A data quality issue in one pipeline often manifests as a different problem in another. A delayed data source causes missing joins, which causes null values in a feature table, which causes model training to produce lower accuracy. Observability platforms correlate events across pipelines to surface the root cause rather than the symptom.

Automated Data Profiling

Every batch of data that flows through your pipeline gets profiled automatically. Row counts, null percentages, value distributions, cardinality — all tracked over time. When a profile deviates from the norm, you know before your model does.
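As a rough illustration, a batch profile can be as small as a dictionary of counts. The sketch below assumes each batch is a list of dictionaries and covers only row count, null percentage, and cardinality; value-distribution statistics are left out for brevity, and the column names are made up.

```python
def profile_batch(rows, columns):
    """Summarize one batch: row count plus null rate and cardinality per column."""
    total = len(rows)
    profile = {"row_count": total, "columns": {}}
    for col in columns:
        values = [row.get(col) for row in rows]
        non_null = [v for v in values if v is not None]
        profile["columns"][col] = {
            "null_pct": 100.0 * (total - len(non_null)) / total if total else 0.0,
            "cardinality": len(set(non_null)),
        }
    return profile

# Hypothetical batch with one missing country and one missing amount.
batch = [
    {"user_id": 1, "country": "DE", "amount": 19.99},
    {"user_id": 2, "country": "DE", "amount": None},
    {"user_id": 3, "country": None, "amount": 5.00},
    {"user_id": 4, "country": "FR", "amount": 42.50},
]
profile = profile_batch(batch, ["user_id", "country", "amount"])
print(profile)
```

Storing one such profile per batch gives the time series that later comparisons against "the norm" depend on.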

Data Drift: The Silent Model Killer Observability Exposes

Data drift deserves its own section because it's the most insidious data quality problem in production ML. Unlike a schema break or a null value flood, drift usually happens gradually. Your model doesn't suddenly stop working. It slowly becomes less accurate, and by the time anyone notices, the damage is done.

What Causes Data Drift

Concept drift: The relationship between input features and the target variable changes. A pricing model trained during normal economic conditions won't work during a recession because the underlying relationship between features (inventory levels, demand signals) and price has shifted.

Covariate shift: The distribution of input features changes even though the underlying relationship stays the same. A credit scoring model trained on applicants from one demographic region gets deployed to a different region where income distributions and spending patterns differ.

Upstream data changes: A data provider changes their methodology, a new data source gets added to the pipeline, or an existing source changes its formatting. The data still arrives, but it no longer means what it used to.

How Observability Catches Drift

Observability platforms monitor feature distributions continuously. They track statistical measures — mean, variance, skewness, kurtosis — for every feature over time. When distributions shift beyond configurable thresholds, alerts fire.

More sophisticated platforms add statistical tests and divergence measures, such as the Kolmogorov-Smirnov test, the Population Stability Index, and Jensen-Shannon divergence, to quantify drift magnitude and help determine whether retraining is needed.

The key advantage over manual checks is coverage. A team might monitor 5-10 critical features manually. Observability monitors all of them, all the time.
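Two of the drift measures named above are short enough to sketch with the standard library. This is a simplified illustration: the equal-width binning, the `eps` floor for empty buckets, and the synthetic samples are assumptions, and the 0.25 cutoff is only a commonly quoted rule of thumb for PSI, not a universal threshold.

```python
import math
from bisect import bisect_right

def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline sample and a new sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def bucket_shares(sample):
        counts = [0] * bins
        for x in sample:
            idx = int((x - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        return [max(c / len(sample), eps) for c in counts]

    e, a = bucket_shares(expected), bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

def ks_statistic(sample_a, sample_b):
    """Two-sample Kolmogorov-Smirnov statistic: largest gap between empirical CDFs."""
    a, b = sorted(sample_a), sorted(sample_b)
    return max(
        abs(bisect_right(a, x) / len(a) - bisect_right(b, x) / len(b))
        for x in a + b
    )

# Synthetic feature: baseline spread over 0..99, new data squeezed into 50..99.5.
baseline = [i % 100 for i in range(1000)]
shifted = [(i % 100) * 0.5 + 50 for i in range(1000)]

print(psi(baseline, baseline), ks_statistic(baseline, baseline))
print(psi(baseline, shifted) > 0.25, ks_statistic(baseline, shifted))
```

Running both functions per feature on every batch is what turns "monitor 5-10 critical features" into "monitor all of them". In practice a library implementation such as SciPy's two-sample KS test would also supply a p-value, which this sketch does not compute.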

Five Data Quality Problems Observability Catches Before Training Starts

1. Missing and Null Value Spikes

An upstream API changes its response format and stops populating three fields your feature engineering depends on. Without observability, those nulls flow through your pipeline, get imputed or dropped during preprocessing, and produce a subtly different training set. With observability, the null rate spike triggers an alert within minutes of the first affected batch.

2. Duplicate Records

A retry mechanism in your data ingestion layer fires twice due to a timeout, doubling a batch of records. Your model now overweights that data. Observability detects the volume anomaly and flags the duplicate keys.
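Flagging duplicate keys can be sketched in a few lines. The example assumes records are dictionaries and that `txn_id` is the business key; both the field name and the sample batch are hypothetical.

```python
from collections import Counter

def find_duplicate_keys(records, key_fields):
    """Return each key that appears more than once, with its occurrence count."""
    counts = Counter(tuple(rec[f] for f in key_fields) for rec in records)
    return {key: n for key, n in counts.items() if n > 1}

batch = [
    {"txn_id": "t-100", "amount": 25.0},
    {"txn_id": "t-101", "amount": 90.0},
    {"txn_id": "t-100", "amount": 25.0},  # replayed by a retry
]
duplicates = find_duplicate_keys(batch, ["txn_id"])
print(duplicates)
```

Pairing this key check with a row-count comparison helps separate a genuine traffic spike (more rows, no repeated keys) from a replayed batch.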

3. Label Quality Degradation

In supervised learning, label quality matters as much as feature quality. When a labeling team changes, annotation guidelines shift, or automated labeling logic contains a bug, label distributions change. Observability tracks label distributions over time and catches shifts before they corrupt your next training run.
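One way to put a number on a label shift is to compare the label shares of two training snapshots. The sketch below uses Jensen-Shannon divergence with base-2 logarithms, so the result falls between 0 (identical) and 1 (no overlap); the label names and counts are invented for illustration, and any alert threshold would need tuning per dataset.

```python
import math
from collections import Counter

def label_shares(labels):
    """Fraction of examples carrying each label."""
    counts = Counter(labels)
    return {label: n / len(labels) for label, n in counts.items()}

def js_divergence(p, q):
    """Jensen-Shannon divergence (base 2) between two label distributions."""
    def kl(a, m):
        return sum(a[k] * math.log2(a[k] / m[k]) for k in a if a[k] > 0)

    keys = set(p) | set(q)
    p = {k: p.get(k, 0.0) for k in keys}
    q = {k: q.get(k, 0.0) for k in keys}
    m = {k: 0.5 * (p[k] + q[k]) for k in keys}
    return 0.5 * kl(p, m) + 0.5 * kl(q, m)

# Hypothetical snapshots: the fraud share jumps from 5% to 20%.
previous = ["fraud"] * 50 + ["legit"] * 950
current = ["fraud"] * 200 + ["legit"] * 800
js = js_divergence(label_shares(previous), label_shares(current))
print(round(js, 4))
```

A jump like this does not prove the labels are wrong, since fraud may really have increased, but it is the kind of change worth reviewing before the next training run.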

4. Stale or Late-Arriving Data

A daily feature pipeline that depends on data from an external partner runs at 6 AM. But the partner's data delivery slips from 5 AM to 7 AM. Your pipeline runs with yesterday's data. Observability monitors data freshness and blocks training jobs that would use stale inputs.
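A freshness gate for this scenario might look like the sketch below. The two-hour `max_age`, the function names, and the dates are assumptions for illustration; the point is only that the training job checks the age of its newest input before it runs.

```python
from datetime import datetime, timedelta, timezone

def is_fresh(last_delivery, now, max_age):
    """True when the newest delivery is recent enough to train on."""
    return now - last_delivery <= max_age

def gate_training(last_delivery, now, max_age=timedelta(hours=2)):
    """Decide whether a scheduled training job may start."""
    if is_fresh(last_delivery, now, max_age):
        return "run"
    return "hold"  # stale input: wait for the delivery instead of training on old data

run_time = datetime(2024, 3, 5, 6, 0, tzinfo=timezone.utc)   # the 6 AM pipeline run
on_time = datetime(2024, 3, 5, 5, 0, tzinfo=timezone.utc)    # today's 5 AM delivery
late = datetime(2024, 3, 4, 5, 0, tzinfo=timezone.utc)       # only yesterday's delivery exists

print(gate_training(on_time, run_time))
print(gate_training(late, run_time))
```

Using timezone-aware timestamps avoids a quiet class of freshness bugs where a partner's local delivery time is compared against a UTC schedule.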

5. Schema Evolution Breaks

A database migration adds a new enum value to a categorical field. Your one-hot encoding pipeline doesn't handle the new value and silently drops it. Observability tracks schema changes, including new values in categorical fields, and alerts when upstream schemas diverge from expected definitions.
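Both halves of this check, new category values and structural schema changes, reduce to set comparisons. The sketch below assumes schemas are plain `{column: type_name}` dictionaries; the `payment_method` values and column names are hypothetical.

```python
def unseen_categories(values, known):
    """Categories present in the batch that the encoder was never fitted on."""
    return sorted(set(values) - set(known))

def schema_diff(expected, observed):
    """Compare {column: type_name} mappings and report what changed."""
    return {
        "added": sorted(set(observed) - set(expected)),
        "removed": sorted(set(expected) - set(observed)),
        "retyped": sorted(
            col for col in set(expected) & set(observed)
            if expected[col] != observed[col]
        ),
    }

known_methods = ["card", "bank_transfer"]
batch_methods = ["card", "wallet", "card", "bank_transfer"]
new_values = unseen_categories(batch_methods, known_methods)

expected_schema = {"txn_id": "string", "amount": "float", "payment_method": "string"}
observed_schema = {"txn_id": "string", "amount": "string", "channel": "string"}
diff = schema_diff(expected_schema, observed_schema)
print(new_values, diff)
```

Running the category check before one-hot encoding turns a silently dropped value into an explicit decision: refit the encoder, map the value to an "other" bucket, or hold the run.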

Building a Data Observability Practice for ML Teams

Implementing data observability doesn't require a massive overhaul. It works best as a progressive build-out, starting with the highest-risk pipelines.

Step 1: Map Your Critical Data Pipelines

Identify the pipelines that feed your highest-impact models. If a fraud detection model protects $50M in transactions monthly, its training pipeline gets observability first.

Step 2: Establish Data Baselines

Before you can detect anomalies, you need baselines. Run your observability platform against 30-60 days of historical data to establish normal ranges for freshness, volume, schema, and distributions.
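A baseline can start as nothing more than a percentile range per metric. The sketch below assumes one value per day for each metric and uses the 1st and 99th percentiles as the normal range; the metric name, the synthetic 30-day history, and the percentile choice are all illustrative assumptions.

```python
import statistics

def build_baseline(history, lower_pct=1, upper_pct=99):
    """Derive a normal range per metric from historical daily values."""
    baseline = {}
    for metric, values in history.items():
        # n=100 yields 99 cut points; index k-1 is the k-th percentile.
        cuts = statistics.quantiles(values, n=100, method="inclusive")
        baseline[metric] = (cuts[lower_pct - 1], cuts[upper_pct - 1])
    return baseline

def out_of_range(baseline, metric, value):
    """True when a new observation falls outside the learned range."""
    low, high = baseline[metric]
    return not (low <= value <= high)

# Thirty days of synthetic row counts with a weekly rhythm.
history = {"row_count": [10_000 + 50 * (day % 7) for day in range(30)]}
baseline = build_baseline(history)

print(out_of_range(baseline, "row_count", 10_150))
print(out_of_range(baseline, "row_count", 4_000))
```

Percentile ranges are less sensitive to a few bad historical days than a mean plus or minus a few standard deviations, which matters when the history itself contains incidents.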

Step 3: Instrument Pipeline Stages

Add observability checkpoints at critical stages — after ingestion, after transformation, after feature engineering, before training. Each checkpoint validates data health before passing it downstream.
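In code, a checkpoint can be a function that either returns the data unchanged or refuses to pass it on. The sketch below is one possible shape, with hypothetical names (`DataHealthError`, `checkpoint`, `run_pipeline`) and two toy checks; a real pipeline would plug in profile, drift, and freshness checks instead.

```python
class DataHealthError(Exception):
    """Raised when a checkpoint rejects the data it was handed."""

def checkpoint(name, checks):
    """Build a function that runs every check and passes data through if all succeed."""
    def run(data):
        failures = [label for label, check in checks if not check(data)]
        if failures:
            raise DataHealthError(f"{name}: failed {', '.join(failures)}")
        return data
    return run

def run_pipeline(data, stages):
    """Apply each (transform, checkpoint) pair in order."""
    for transform, check in stages:
        data = check(transform(data))
    return data

not_empty = ("not_empty", lambda rows: len(rows) > 0)
no_null_amounts = ("no_null_amounts", lambda rows: all(r["amount"] is not None for r in rows))

stages = [
    # Ingestion: pass rows through, then confirm something arrived.
    (lambda rows: rows, checkpoint("after_ingestion", [not_empty])),
    # Transformation: drop null amounts, then confirm none remain.
    (lambda rows: [r for r in rows if r["amount"] is not None],
     checkpoint("after_transformation", [not_empty, no_null_amounts])),
]

clean = run_pipeline([{"amount": 10.0}, {"amount": None}], stages)
print(clean)
```

Raising at the failing stage keeps bad data from reaching training and names the stage in the error, which shortens root cause analysis.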

Step 4: Set Up Alerting and Escalation

Not every anomaly needs a 2 AM page. Configure severity levels based on business impact. A 5% volume drop in a low-priority pipeline gets a Slack notification. A distribution shift in a production fraud model's training data gets an immediate page.
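Severity routing can be expressed as a small pure function. Everything in this sketch is an assumption made for illustration: the `Anomaly` fields, the `PIPELINE_PRIORITY` registry, the intermediate "ticket" tier, and the 50% magnitude cutoff.

```python
from dataclasses import dataclass

@dataclass
class Anomaly:
    pipeline: str
    kind: str          # e.g. "volume_drop", "distribution_shift"
    magnitude: float   # relative size of the deviation, 0.05 = 5%

# Hypothetical priority registry; real tiers would come from the pipeline map.
PIPELINE_PRIORITY = {"fraud_training": "critical", "weekly_report": "low"}

def route(anomaly):
    """Pick a notification channel from pipeline priority and anomaly kind."""
    priority = PIPELINE_PRIORITY.get(anomaly.pipeline, "low")
    if priority == "critical" and anomaly.kind == "distribution_shift":
        return "page"
    if priority == "critical" or anomaly.magnitude >= 0.5:
        return "ticket"
    return "slack"

print(route(Anomaly("weekly_report", "volume_drop", 0.05)))
print(route(Anomaly("fraud_training", "distribution_shift", 0.20)))
```

Keeping the routing rules in one function makes them easy to review with the business owners who decide what deserves a page.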

Step 5: Integrate with Your ML Workflow

Connect observability alerts to your ML workflow tooling. When a data quality issue is detected, automatically hold affected training jobs, notify the data engineering team, and log the incident for root cause analysis.

What ML Engineers Should Also Know

How does data observability differ from traditional data validation?

Traditional data validation runs predefined checks — null constraints, range validations, referential integrity. It catches the issues you've anticipated. Data observability adds ML-driven anomaly detection that catches unexpected issues, plus continuous monitoring that runs between scheduled validation jobs. Think of validation as a checkpoint and observability as continuous surveillance.

Can observability prevent model bias in training data?

Observability can detect statistical imbalances that often lead to bias — underrepresented classes, skewed feature distributions, label imbalances across demographic segments. It won't tell you whether a bias is ethically problematic (that requires domain expertise), but it will tell you when your data's demographic profile has shifted from your established baselines.

What's the relationship between data observability and MLOps?

Data observability is a foundational layer of mature MLOps practices. MLOps covers the full lifecycle — experimentation, training, deployment, monitoring. Data observability specifically focuses on ensuring the data flowing into that lifecycle is trustworthy. Without data observability, you're building MLOps on an unreliable foundation.

How much data observability do you need for real-time ML systems?

Real-time ML systems need real-time data observability. If your model makes predictions on streaming data, your observability needs to monitor that stream with the same latency. Batch models need batch-level observability. The monitoring cadence should match the data cadence.

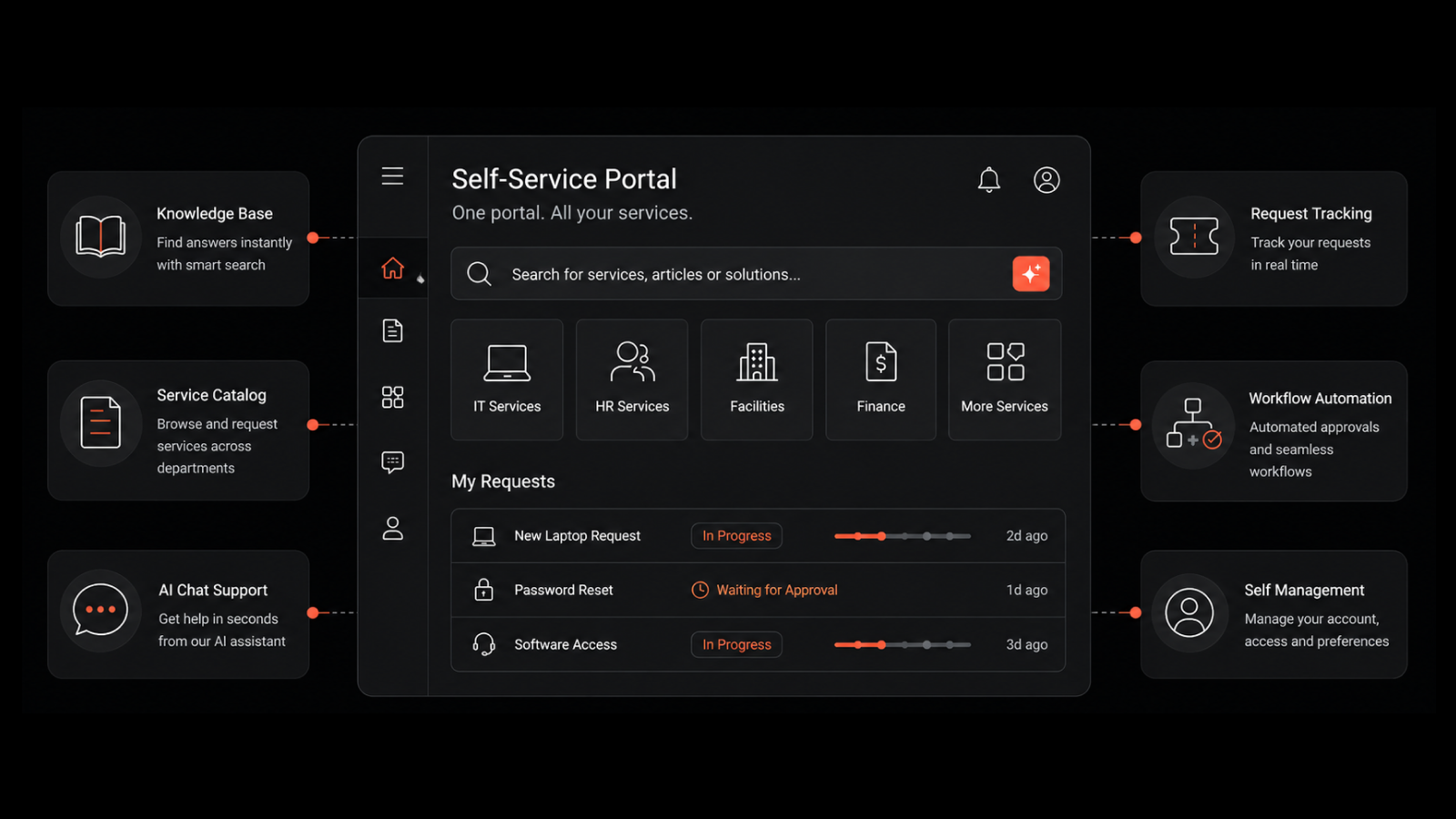

How Motadata Observability Strengthens AI/ML Data Pipelines

Motadata's observability platform brings the same AI-native monitoring capabilities that power enterprise infrastructure observability to your data pipelines. The platform's anomaly detection engine continuously profiles data flowing through your pipelines, catching distribution shifts, volume anomalies, and freshness violations before they reach training jobs.

With unified dashboards that correlate infrastructure health with data pipeline performance, ML teams get a single view of both the systems running their pipelines and the data flowing through them. When a data quality issue stems from an infrastructure problem — a slow database, a network bottleneck, a failed cloud service — Motadata's root cause analysis traces from data anomaly to infrastructure root cause in seconds.

For organizations building AI/ML capabilities, Motadata provides the observability foundation that turns data quality from a recurring crisis into a managed, measurable practice.


FAQ: Observability and AI/ML Data Quality

Q: What is data observability for AI/ML?

A: Data observability applies real-time monitoring, anomaly detection, and lineage tracking to data pipelines that feed ML models. It continuously checks data freshness, volume, schema compliance, and statistical distributions to catch quality issues before they corrupt model training.

Q: How does observability improve AI model accuracy?

A: Observability catches data quality issues — missing values, distribution shifts, stale data, schema breaks — before they reach training pipelines. By ensuring training data is accurate, complete, and representative, observability directly improves the quality of the patterns ML models learn.

Q: What is data drift and why does it matter for ML?

A: Data drift occurs when the statistical distribution of input data changes over time, causing model predictions to degrade. Observability platforms detect drift by continuously comparing live data distributions against training baselines, alerting teams when retraining is needed.

Q: How do you implement data observability for ML pipelines?

A: Start by mapping your critical data pipelines, establishing baselines from historical data, instrumenting key pipeline stages with monitoring checkpoints, setting up severity-based alerting, and integrating with your ML workflow tooling to automatically hold training jobs when issues are detected.

Q: Can data observability work with both real-time and batch ML systems?

A: Yes. For batch training pipelines, observability profiles each batch as it lands, before the next scheduled job consumes it. For real-time inference systems, it monitors streaming data with matching latency. The monitoring cadence should always match the data cadence of your ML system.

Author

Arpit Sharma

Senior Content Marketer

Arpit Sharma is a Senior Content Marketer at Motadata with over 8 years of experience in content writing. Specializing in telecom, fintech, AIOps, and ServiceOps, Arpit crafts insightful and engaging content that resonates with industry professionals. Beyond his professional expertise, he is an avid reader, enjoys running, and loves exploring new places.