What Is Mean Time to Resolution (MTTR)? Why It Matters and How to Reduce It

Jagdish Sajnani

How quickly can you restore service when an incident hits your system?

Most IT teams are not slowed down by detecting incidents. The challenge starts after something breaks, when the goal is to bring services back online as quickly as possible.

Modern systems are highly distributed. Alerts arrive from multiple tools, dependencies are complex, and it is often difficult to immediately understand what actually failed.

A single incident can trigger a cascade of notifications across dashboards, teams, and monitoring systems. Even when detection is fast, resolution is often slow.

Mean Time to Resolution (MTTR) is the metric that captures this delay. It measures the total time taken to restore normal service after an incident occurs.

Industry incident response studies suggest that even mature IT organizations lose significant time in triage, context gathering, and escalation rather than in executing the actual fix. As a result, MTTR reflects not just engineering speed but overall operational clarity.

In this guide, you will learn what MTTR really measures, why it stays high in most environments, and how modern IT teams reduce it using observability, automation, and AIOps-driven workflows.

What MTTR Actually Measures in Modern IT Operations

Mean Time to Resolution (MTTR) measures the average time it takes to restore a service after an incident, from detection through investigation to confirmed resolution.

It is one of the most widely used metrics in IT operations, NOC environments, and SRE teams because it directly reflects operational efficiency during failures. However, most teams measure it without fully understanding what it includes in real production systems.

In practice, MTTR is not just about how fast engineers fix something. It reflects how quickly an organization can move from detection to full-service restoration with confidence that the issue is truly resolved.

Mean Time to Resolution formula

MTTR = Total Resolution Time / Number of Incidents

This formula looks simple, but real-world interpretation is where most teams go wrong.

Resolution time is not just the time spent on the fix. It includes multiple operational stages:

Incident detection or reporting

Initial triage and classification

Investigation and root cause analysis

Fix implementation or workaround

Validation and service restoration confirmation

Incident closure in ITSM systems

Each of these steps adds delay, and in most enterprise environments, the majority of MTTR is consumed before the actual fix even begins.
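As a minimal sketch of the formula in practice, the Python snippet below computes MTTR from a list of incident records. The record fields (`detected`, `resolved`) and the sample incidents are hypothetical; the sketch assumes `resolved` marks verified restoration and closure, in line with the stages listed above, rather than the moment a fix was applied.

```python
from datetime import datetime, timedelta

# Hypothetical incident records. "resolved" is assumed to be the time service
# restoration was verified and the incident closed, not when the fix started.
incidents = [
    {"id": "INC-1", "detected": datetime(2026, 3, 2, 9, 0), "resolved": datetime(2026, 3, 2, 9, 40)},
    {"id": "INC-2", "detected": datetime(2026, 3, 3, 14, 5), "resolved": datetime(2026, 3, 3, 16, 5)},
    {"id": "INC-3", "detected": datetime(2026, 3, 5, 22, 30), "resolved": datetime(2026, 3, 5, 23, 0)},
]


def mttr(records: list[dict]) -> timedelta:
    """MTTR = total resolution time / number of incidents."""
    if not records:
        raise ValueError("MTTR is undefined when there are no incidents")
    total = sum((r["resolved"] - r["detected"] for r in records), timedelta())
    return total / len(records)


# 40 + 120 + 30 = 190 minutes across 3 incidents
print(mttr(incidents))  # 1:03:20
```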

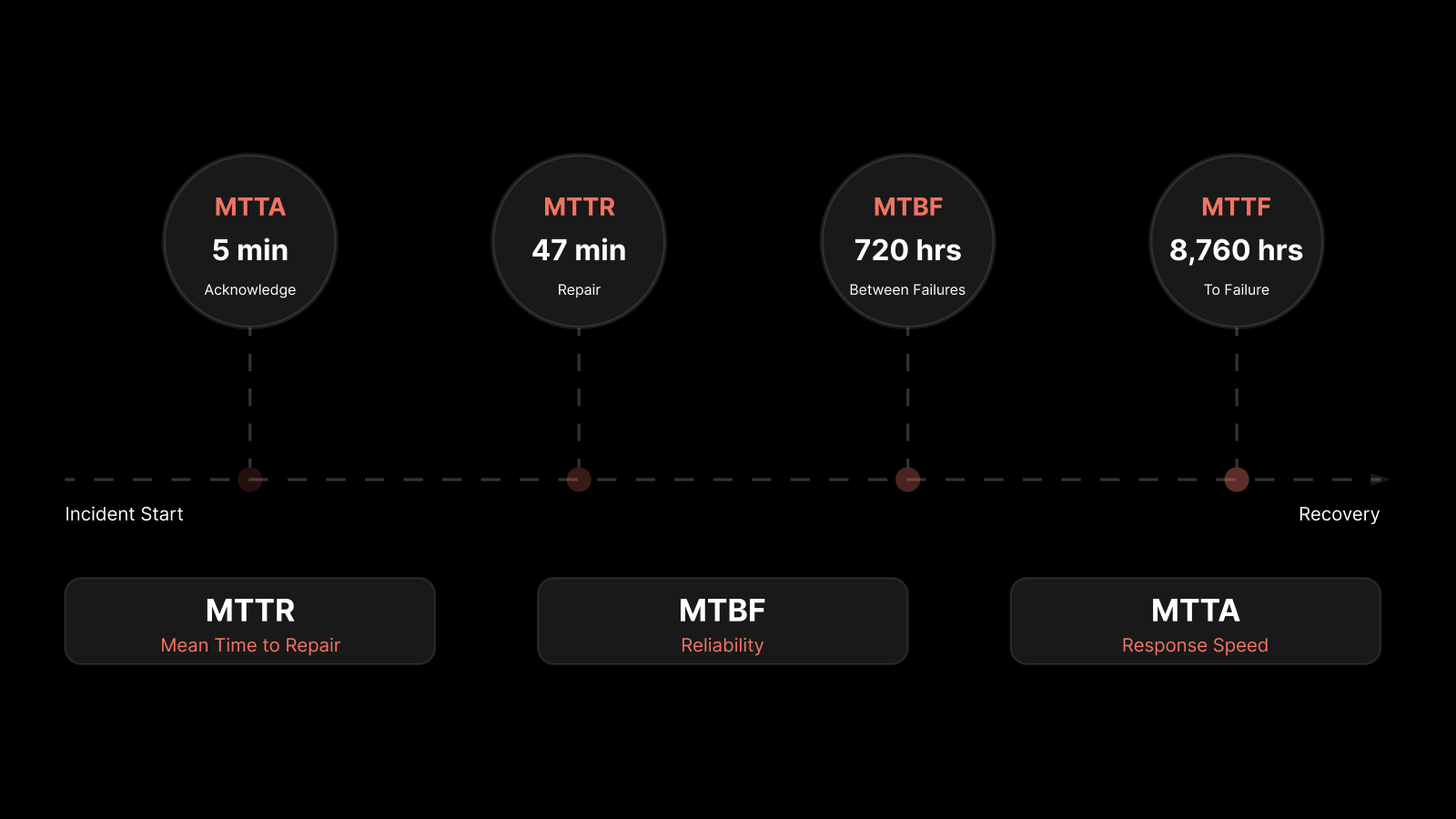

MTTR vs MTTD vs MTTF vs Repair and Recovery Metrics

MTTR is often misunderstood because it overlaps with several related operational metrics.

MTTD (Mean Time to Detect) measures how long it takes to identify that something is wrong. This influences MTTR because late detection automatically increases total resolution time.

MTTF (Mean Time to Failure) measures system reliability, not incident response. It tells you how long, on average, a system runs before it fails.

MTTR itself can also be interpreted in different ways depending on context:

Repair time: Time spent actively fixing the issue

Recovery time: Time taken to fully restore service after impact

Resolution time: End-to-end lifecycle from detection to closure

In modern ITSM and SRE environments, MTTR is usually treated as full lifecycle resolution time.

However, benchmarking without aligning definitions leads to incorrect comparisons across teams, vendors, and industries.

Why MTTR Averages Are Misleading in IT Environments

Most organizations report MTTR as a single average number. This creates a false sense of operational stability.

The problem is that incident distribution is not uniform. A typical enterprise environment includes:

Many small incidents that resolve quickly

A few high-severity incidents that take hours or days

Repeated recurring issues that inflate long-tail metrics

When these are averaged together, the operational pain is hidden.

For example:

20 incidents resolve in 10–15 minutes

2 incidents take 6–8 hours

Reported MTTR may still look “healthy” at under 1 hour

But the customer experience is dominated by long-tail incidents. This is why mature SRE and NOC teams prioritize:

Median MTTR

90th percentile MTTR

MTTR segmented by severity (P1, P2, P3)

These metrics reveal operational reality far better than a single average value.
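The arithmetic behind the example above can be checked with a short Python sketch. The individual durations are assumed values within the stated ranges, and `percentile` uses the nearest-rank definition; other tools interpolate, so their numbers may differ slightly.

```python
import math
import statistics

# Resolution times in minutes for the example above: 20 quick incidents
# (10-15 min) and 2 long-tail incidents (6 h and 8 h). Values are illustrative.
quick = [10, 11, 12, 13, 14, 15] * 3 + [12, 13]
long_tail = [360, 480]
durations = quick + long_tail


def percentile(values: list[float], p: float) -> float:
    """Nearest-rank percentile (one of several common definitions)."""
    ordered = sorted(values)
    rank = math.ceil(p / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]


mean = statistics.mean(durations)
median = statistics.median(durations)
p90 = percentile(durations, 90)
p95 = percentile(durations, 95)
tail_share = sum(long_tail) / sum(durations)

print(f"mean={mean:.1f} median={median} p90={p90} p95={p95} tail_share={tail_share:.0%}")
# mean=49.5 median=13.0 p90=15 p95=360 tail_share=77%
```

With only 22 incidents, the 90th percentile still lands on a quick incident; the long tail only shows up at the 95th percentile, while the two long incidents account for about 77% of all resolution time. In small samples, segmenting by severity is often more revealing than any single percentile.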

Why MTTR matters more in 2026 than before

Modern IT environments are fundamentally more complex than traditional infrastructure.

A single incident can now span:

Cloud infrastructure layers

Microservices and distributed systems

API gateways and service meshes

Identity providers and IAM systems

Observability and logging pipelines

This means resolution is no longer a linear process. It is a cross-system coordination problem.

As a result, MTTR has become a measure of system maturity. It reflects:

How well your tools are integrated

How quickly context can be gathered

How automated your response workflows are

How clearly ownership is defined across systems

This is why MTTR, framed as time to restore service, is one of the core DORA metrics and a staple of modern SRE performance models.

A high MTTR is often a signal of architectural and tooling fragmentation.

Why MTTR Is Still High in Most IT Teams (Even with Modern Tools)

Most IT teams today do not struggle because they lack tools. They struggle because their tools are not working together in a meaningful way.

Even in mature environments with observability platforms, ITSM systems, and cloud monitoring, MTTR remains high. The reason is simple. Incident resolution is still driven by fragmented context, manual investigation, and slow coordination between systems.

MTTR is no longer a visibility problem alone. It is a coordination and correlation problem across tools, teams, and data sources.

1. Alert Overload and Fragmented Monitoring

Modern IT environments generate an extremely high volume of alerts across infrastructure, applications, cloud services, and security layers.

Each tool is designed to detect issues independently. But when every alert is treated as important, none of them is actionable.

In real environments, this creates three operational problems:

Engineers receive too many alerts per incident

Multiple tools report the same issue differently

Noise hides the actual root cause signal

This leads to alert fatigue, which directly increases MTTR.

Instead of moving toward resolution, teams spend time filtering, deduplicating, and validating alerts. In many cases, the actual incident is clear only after multiple dashboards are checked and correlated manually.

This is why modern MTTR reduction starts with alert correlation, not alert generation.

2. Lack of Context Across Tools and Teams

Most enterprise environments are still structured around functional silos:

Network operations teams

Application teams

Infrastructure teams

Cloud operations teams

Each team owns its own monitoring stack. Each stack produces its own alerts. Each dashboard tells only part of the story.

When an incident spans multiple layers, no single team has complete visibility.

For example:

Network team sees latency spikes

Application team sees service degradation

Cloud team sees resource saturation

Individually, none of these signals explains the full incident. Together, they point to the root cause.

But without unified context, resolution becomes a coordination exercise rather than a technical fix.

This is one of the most underestimated drivers of MTTR inflation in enterprise IT environments.

3. Manual Triage Slows Everything Down

Even in environments with strong observability, triage is still largely manual.

A typical incident workflow looks like this:

Review incoming alerts

Open multiple dashboards

Check logs across systems

Compare recent deployments or changes

Form a hypothesis

Validate through further investigation

Escalate if unclear

This process repeats for every incident, even when patterns are known.

The issue is not lack of skill. It is a lack of automation in the decision-making layer.

Manual triage becomes especially expensive in:

High-frequency incident environments

Multi-service architectures

Microservices-based systems

Every additional system increases the time required to understand impact and isolate root cause.

This is why organizations with similar toolsets often have very different MTTR outcomes. The difference is how much of triage is automated versus manual.

4. Missing Dependency Visibility Across Systems

One of the most critical but often ignored causes of high MTTR is missing dependency mapping.

In complex IT environments, services are deeply interconnected:

Applications depend on APIs

APIs depend on databases

Databases depend on storage and compute layers

Identity systems control access across all of them

When dependency relationships are unclear, incident resolution slows down significantly.

Engineers are forced to answer basic questions during an incident:

Which service is impacted?

What is the upstream cause?

Who owns this component?

What other systems are affected?

Without a reliable Configuration Management Database (CMDB) or live dependency mapping, this becomes a manual discovery process during every incident.

This directly increases both triage time and escalation time, which are two of the largest contributors to MTTR.
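A rough heuristic for the "upstream cause" question is to look for alerting services whose own dependencies are healthy. This Python sketch assumes a hand-written dependency map (`DEPENDS_ON` and the service names are hypothetical); in practice the map would come from a CMDB or live discovery.

```python
# Hypothetical dependency map: each service lists what it depends on,
# mirroring the application -> API -> database -> storage chain above.
DEPENDS_ON = {
    "checkout-app": ["orders-api", "identity"],
    "orders-api": ["orders-db", "identity"],
    "orders-db": ["block-storage"],
    "identity": [],
    "block-storage": [],
}


def upstream_of(service: str, graph: dict) -> set:
    """All transitive dependencies of a service (iterative depth-first walk)."""
    seen, stack = set(), list(graph.get(service, []))
    while stack:
        dep = stack.pop()
        if dep not in seen:
            seen.add(dep)
            stack.extend(graph.get(dep, []))
    return seen


def probable_root_causes(alerting: set, graph: dict) -> set:
    """Alerting services with no alerting dependency are the likeliest origin."""
    return {s for s in alerting if not (upstream_of(s, graph) & alerting)}


alerts = {"checkout-app", "orders-api", "orders-db"}
print(probable_root_causes(alerts, DEPENDS_ON))  # {'orders-db'}
```

The heuristic misses failures in components that do not alert themselves (for example, degraded storage without its own monitor), which is one reason dependency data and monitoring coverage need to evolve together.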

5. No Automation Between Detection and Resolution

Most organizations still operate in a reactive model:

Monitoring detects an issue

Alerts are generated

Humans investigate and resolve

This model does not scale with modern cloud environments.

A significant portion of incidents in production systems are repetitive or known issues. These do not require full investigation cycles every time.

Without automation:

Known incidents are treated as new incidents

Engineers repeatedly perform the same resolution steps

Engineering effort grows linearly with incident volume

This is where MTTR automation becomes critical.

Modern AIOps-driven environments reduce MTTR by:

Automatically correlating alerts into incidents

Suggesting probable root causes

Triggering predefined remediation workflows

Executing runbooks for known failure patterns

The absence of this automation layer is one of the biggest gaps between tool-rich and outcome-effective IT teams.

MTTR Benchmarks: What Good Actually Looks Like in 2026

You cannot improve Mean Time to Resolution (MTTR) without knowing what “good” looks like for your environment.

Many teams set MTTR targets based on internal expectations rather than industry benchmarks. This often leads to either unrealistic goals or a false sense of performance.

In reality, MTTR varies based on system criticality, business impact, and operational maturity.

The key is not to chase a single number. It is to understand what level of performance your systems and users actually require.

1. MTTR Benchmarks by Incident Severity

Not all incidents are equal, and MTTR should never be measured as a single blended number.

High-performing IT and SRE teams define resolution targets based on severity levels:

P1 (Critical incidents): These impact core business services or customer-facing systems. Leading organizations target resolution within 30 to 60 minutes.

P2 (High severity): These affect important services but may have partial workarounds. Typical MTTR ranges from 1 to 4 hours.

P3 (Medium severity): These are limited-impact issues or internal system disruptions. Most teams resolve them within the same business day.

P4 (Low severity): These include minor issues or non-urgent requests. Resolution timelines typically range from 1 to 3 business days.

This severity-based approach ensures that engineering effort aligns with business impact. It also helps maintain MTTR SLA compliance without overwhelming on-call teams.
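A sketch of severity-segmented reporting, assuming targets taken from the upper end of each range above. The `TARGETS_MIN` values, the conversion of P3/P4 business-day targets into wall-clock minutes, and the sample incidents are all illustrative assumptions, not a standard.

```python
from collections import defaultdict
from statistics import median

# Hypothetical resolution targets in minutes, from the upper end of the ranges
# above. P3/P4 business-day targets are approximated in wall-clock minutes
# (8 h and 3 x 24 h); a real SLA would use a business-hours calendar.
TARGETS_MIN = {"P1": 60, "P2": 240, "P3": 8 * 60, "P4": 3 * 24 * 60}

# (severity, resolution time in minutes) for a hypothetical month of incidents.
incidents = [
    ("P1", 42), ("P1", 95), ("P2", 130), ("P2", 200), ("P2", 310),
    ("P3", 240), ("P3", 600), ("P4", 1500),
]


def mttr_by_severity(records):
    """Group resolution times by severity; report mean, median, and share within target."""
    grouped = defaultdict(list)
    for severity, minutes in records:
        grouped[severity].append(minutes)
    report = {}
    for severity, times in sorted(grouped.items()):
        within = sum(1 for t in times if t <= TARGETS_MIN[severity])
        report[severity] = {
            "mean": sum(times) / len(times),
            "median": median(times),
            "within_target": within / len(times),
        }
    return report


for sev, summary in mttr_by_severity(incidents).items():
    print(sev, summary)
```

In this sample, the P1 mean (68.5 minutes) misses a 60-minute target even though half of the P1 incidents were resolved within it, a distinction a single blended number would hide.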

2. MTTR Benchmarks Across Industries

MTTR expectations also vary significantly by industry due to differences in risk, regulation, and revenue impact.

Financial services: Systems are highly sensitive to downtime. Leading organizations target sub-30-minute MTTR for critical incidents due to direct financial and regulatory impact.

Healthcare: Resolution time depends on system type. Clinical systems demand rapid recovery, while back-office systems allow more flexibility. Compliance requirements still enforce tight SLAs.

E-commerce and digital platforms: Downtime directly affects revenue. During peak periods such as sales events, MTTR targets are often reduced by half to minimize business loss.

SaaS and cloud-native companies: These organizations operate under strict uptime commitments, often 99.9% or higher. This requires consistently low MTTR, especially for customer-facing services.

Understanding your industry context helps you set realistic and competitive MTTR targets.

3. MTTR in the DORA Metrics Framework

MTTR is a core metric in the DORA (DevOps Research and Assessment) framework, where it is defined as “time to restore service.”

DORA categorizes organizations into performance tiers based on their operational metrics:

Elite performers: Restore service in less than 1 hour

High performers: Typically resolve incidents within a few hours to one day

Medium performers: Resolution time ranges from one day to one week

Low performers: Take more than a week, sometimes several weeks, to restore service

This framework is widely used because it connects MTTR directly to engineering maturity and operational efficiency.

Organizations that consistently achieve low MTTR also tend to perform better in deployment frequency, change failure rate, and overall system reliability.
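The tiers above can be expressed as a simple lookup. This sketch uses the boundaries described in this article and applies them to a typical (for example, median) restore time. DORA's published cluster definitions have shifted between report years, so treat the thresholds as illustrative rather than as an official specification.

```python
from datetime import timedelta

# Tier boundaries as described in this article (exclusive upper bounds).
# These are illustrative; DORA's clusters are re-derived from survey data.
TIERS = [
    (timedelta(hours=1), "Elite"),
    (timedelta(days=1), "High"),
    (timedelta(weeks=1), "Medium"),
]


def dora_restore_tier(typical_restore: timedelta) -> str:
    """Map a typical (e.g. median) time to restore service onto a tier."""
    for upper_bound, tier in TIERS:
        if typical_restore < upper_bound:
            return tier
    return "Low"


print(dora_restore_tier(timedelta(minutes=45)))  # Elite
print(dora_restore_tier(timedelta(hours=6)))     # High
print(dora_restore_tier(timedelta(days=3)))      # Medium
print(dora_restore_tier(timedelta(days=10)))     # Low
```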

The MTTR Equation Nobody Talks About (Beyond the Formula)

Most teams treat Mean Time to Resolution (MTTR) as a single metric. In reality, MTTR is not one number. It is the combined result of multiple stages that occur across the full incident lifecycle.

The standard formula only gives an average. It does not explain where time is actually being spent or what is slowing down recovery. That is why MTTR can look acceptable on dashboards while real operational delays still exist.

To improve MTTR in a meaningful way, you need to break it into its underlying components and understand where friction is introduced.

MTTR is a Combination of Multiple Time Layers

Every incident moves through a sequence of stages before it is fully resolved. These stages are often not visible in standard reporting, but they define the actual resolution experience.

A typical MTTR breakdown includes:

Detection time (MTTD): Time taken to identify that an issue exists

Triage time: Time spent reviewing alerts, validating impact, and defining scope

Diagnosis time: Time required to identify the root cause of the issue

Resolution time (fix): Time taken to apply a fix or implement a workaround

Verification time: Time required to confirm that the service is fully restored and stable

In theory, these stages appear sequential. In practice, they are often overlapping, revisited multiple times, or delayed due to missing context and unclear ownership.

This is why MTTR can vary significantly even when incident types look similar.
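To see where the time goes, a breakdown can be computed from lifecycle timestamps. The event names and times below are hypothetical, and the sketch assumes the stages run strictly one after another, which, as noted above, real incidents often do not.

```python
from datetime import datetime

# Hypothetical lifecycle timestamps for one incident. Real ITSM tools use
# their own field names; these simply follow the stages listed above.
timeline = {
    "impact_started": datetime(2026, 4, 7, 10, 0),
    "detected": datetime(2026, 4, 7, 10, 4),
    "triaged": datetime(2026, 4, 7, 10, 35),
    "root_cause_found": datetime(2026, 4, 7, 11, 20),
    "fix_applied": datetime(2026, 4, 7, 11, 28),
    "verified": datetime(2026, 4, 7, 11, 50),
}

# (stage, start event, end event), assumed to be sequential.
STAGES = [
    ("detection", "impact_started", "detected"),
    ("triage", "detected", "triaged"),
    ("diagnosis", "triaged", "root_cause_found"),
    ("fix", "root_cause_found", "fix_applied"),
    ("verification", "fix_applied", "verified"),
]


def stage_minutes(events: dict) -> dict:
    """Minutes spent in each stage, computed from consecutive timestamps."""
    return {
        name: (events[end] - events[start]).total_seconds() / 60
        for name, start, end in STAGES
    }


breakdown = stage_minutes(timeline)
total = sum(breakdown.values())
for name, minutes in breakdown.items():
    print(f"{name:<13} {minutes:5.0f} min  {minutes / total:4.0%}")
```

In this made-up incident, triage and diagnosis take 76 of 110 minutes (about 69%), while the fix itself takes 8.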

Where Most MTTR Time is Actually Lost

Many teams assume that slow resolution is the main driver of high MTTR. In most environments, that is not true.

The majority of time is typically spent before the fix even begins.

In modern IT systems:

Detection is usually fast due to monitoring tools

Many fixes are known, documented, or repeatable

The real delay happens during triage and diagnosis

This happens because:

Alerts lack sufficient context

Data is distributed across multiple disconnected tools

Engineers must manually correlate signals

Ownership of services is not immediately clear

As a result, teams spend more time understanding the incident than resolving it.

This is also why simply adding more monitoring tools does not improve MTTR. It often increases noise without improving clarity.

Why triage time dominates in distributed systems

In traditional infrastructure, incidents were more isolated, and root cause analysis was relatively straightforward.

In modern cloud and microservices environments, that is no longer the case.

Today:

Services are tightly interconnected

Infrastructure is dynamic and elastic

Failures propagate across multiple layers

A single underlying issue can create multiple symptoms across different systems.

For example:

A database slowdown may appear as application latency

A network degradation may surface as API failures

A configuration change may impact several dependent services

Without proper correlation, these signals look like separate incidents.

Triage then becomes the process of connecting fragmented signals into a single coherent root cause. This is where most MTTR time is consumed.

Why resolution is no longer the main bottleneck

In many environments, the actual fix is not the hardest part of incident management.

Most common incidents already have established solutions:

Restarting services

Rolling back deployments

Scaling infrastructure

Clearing resource bottlenecks

Once the issue is clearly understood, these actions are often quick to execute.

The real challenge is reaching that level of clarity with confidence.

This is why improving only execution speed does not significantly reduce MTTR. The bottleneck is earlier in the lifecycle, not at the point of remediation.

The hidden impact of verification delays

MTTR does not end when the fix is applied. A significant portion of time is also spent on validation and closure.

After remediation, teams still need to:

Confirm system stability

Verify that dependent services are unaffected

Ensure the issue does not recur

Close the incident formally in ITSM systems

In complex environments, this verification step can take longer than expected, especially when visibility is limited across systems.

Incomplete verification also creates additional risk, including incident reopening, which further inflates MTTR.

Why MTTR improvement requires system-level thinking

Because MTTR spans multiple stages, improving it requires changes across the entire incident lifecycle.

Focusing on a single stage rarely produces meaningful improvement.

For example:

Faster detection without better triage still leads to delays

Faster fixes without proper diagnosis can result in repeat incidents

Better tools without integration still create context gaps

This is why high-performing IT and SRE teams treat MTTR as a system-level optimization problem, not a single metric improvement exercise.

They focus on:

Reducing uncertainty during triage

Improving visibility across systems

Automating repetitive decision points

Unifying data across tools for faster context building

MTTR Reduction Framework: From Manual Ops to Automated Recovery

Reducing Mean Time to Resolution (MTTR) is not about working faster during incidents. It is about removing the friction that slows teams down at each stage of the incident lifecycle.

Most delays in MTTR come from lack of context, manual triage, and disconnected tools. High-performing IT teams solve this by building systems that reduce decision time before action is taken.

The shift is clear. Teams move from reactive operations to structured, automated, and context-driven incident response.

Step 1: Build Full-stack Observability Across Your Environment

You cannot reduce MTTR if your team cannot see what is happening across systems.

Most environments still rely on separate tools for:

Infrastructure monitoring

Network visibility

This creates blind spots during incidents. Engineers need to switch between tools to understand what is happening.

Full-stack observability brings together:

Metrics (performance data)

Logs (event records)

Traces (request flow across services)

Events (state changes and alerts)

When these signals are unified, teams gain a complete view of system behavior. This reduces the time required to identify where the issue is occurring.

Without this visibility, MTTR improvements are limited, regardless of team skill.

Step 2: Reduce Alert Noise Using AI-Powered Correlation

Alert volume is one of the biggest blockers to fast incident resolution.

In most environments, a single issue can trigger multiple alerts across different tools. Without correlation, each alert is treated as a separate problem.

AI-powered correlation changes this by:

Grouping related alerts into a single incident

Identifying likely root cause signals

Suppressing duplicate or low-value alerts

Enriching incidents with context

This reduces the alert-to-incident ratio significantly.

Instead of analyzing dozens of alerts, engineers work on a single, enriched incident. This directly reduces triage time, which is typically the largest component of MTTR.
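As an illustration of the grouping step, here is a rule-based sketch, not an AI model: alerts for the same service that arrive within an assumed five-minute window are merged into one incident, and repeats of the same signal are counted instead of duplicated. The `Alert` and `Incident` types, the window size, and the sample alerts are hypothetical; commercial AIOps correlation engines vary by vendor and go well beyond this.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta

WINDOW = timedelta(minutes=5)  # assumed correlation window


@dataclass
class Alert:
    source: str   # monitoring tool that raised the alert
    service: str  # affected service, used here as the correlation key
    check: str    # what fired, e.g. "latency" or "5xx_rate"
    at: datetime


@dataclass
class Incident:
    service: str
    first_seen: datetime
    last_seen: datetime
    signals: dict = field(default_factory=dict)  # (source, check) -> count


def correlate(alerts: list[Alert]) -> list[Incident]:
    """Merge alerts for the same service that arrive within WINDOW of the
    previous one, counting repeated (source, check) pairs instead of duplicating them."""
    open_by_service: dict[str, Incident] = {}
    incidents: list[Incident] = []
    for alert in sorted(alerts, key=lambda x: x.at):
        inc = open_by_service.get(alert.service)
        if inc is None or alert.at - inc.last_seen > WINDOW:
            inc = Incident(alert.service, alert.at, alert.at)
            open_by_service[alert.service] = inc
            incidents.append(inc)
        inc.last_seen = alert.at
        signal = (alert.source, alert.check)
        inc.signals[signal] = inc.signals.get(signal, 0) + 1
    return incidents


t0 = datetime(2026, 5, 1, 12, 0)
alerts = [
    Alert("apm", "orders-api", "latency", t0),
    Alert("apm", "orders-api", "latency", t0 + timedelta(minutes=1)),
    Alert("logs", "orders-api", "5xx_rate", t0 + timedelta(minutes=2)),
    Alert("synthetics", "orders-api", "checkout_flow", t0 + timedelta(minutes=4)),
    Alert("apm", "orders-api", "latency", t0 + timedelta(minutes=30)),
]
incidents = correlate(alerts)
print(len(alerts), "alerts ->", len(incidents), "incidents")  # 5 alerts -> 2 incidents
```

Using the service name as the key keeps the sketch simple but will not merge symptoms that surface on different services; combining it with a dependency map is the natural next step.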

Step 3: Standardize incident runbooks for repeatable resolution

A large percentage of incidents in IT environments are recurring.

However, many teams still handle them manually every time. This leads to inconsistent resolution times and unnecessary delays.

Runbooks solve this by defining:

What the incident looks like

What steps to take first

How to resolve the issue

When to escalate

How to verify resolution

Well-defined runbooks remove guesswork during incidents.

They also allow less experienced engineers to handle incidents effectively, reducing dependency on senior team members.
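Runbooks are easier to maintain and later automate when they are stored as structured data rather than free text. The schema below is a hypothetical sketch that maps one field to each element in the list above; the disk-full example, its thresholds, and the `platform-oncall` team name are made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Runbook:
    """Hypothetical runbook schema mirroring the five elements listed above."""
    name: str
    symptoms: tuple[str, ...]      # what the incident looks like
    first_steps: tuple[str, ...]   # what to do first
    resolution: tuple[str, ...]    # how to resolve the issue
    escalate_after_min: int        # when to escalate if still unresolved
    escalate_to: str
    verification: tuple[str, ...]  # how to confirm the service is restored


DISK_FULL = Runbook(
    name="disk-full-on-app-node",
    symptoms=("disk_usage > 90%", "write errors in application logs"),
    first_steps=("confirm which volume is full", "check for runaway log files"),
    resolution=("rotate and compress logs", "delete temp files older than 7 days"),
    escalate_after_min=20,
    escalate_to="platform-oncall",
    verification=("disk_usage < 75% for 10 min", "no new write errors"),
)


def should_escalate(runbook: Runbook, minutes_elapsed: int) -> bool:
    """Escalate once the runbook's time box is exhausted."""
    return minutes_elapsed >= runbook.escalate_after_min


print(should_escalate(DISK_FULL, 25))  # True
```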

Step 4: Automate First-Response and Known-Fix Workflows

Once runbooks are established, the next step is automation.

Many incidents have predictable resolution paths. These can be automated to eliminate manual effort.

Examples include:

Restarting failed services

Scaling infrastructure automatically

Clearing queues or temporary files

Rolling back faulty deployments

Automation reduces MTTR by removing the need for human intervention in known scenarios.

This is especially important in high-volume environments, where manual handling does not scale.

Over time, organizations can expand automation coverage to handle more complex scenarios.
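A minimal sketch of first-response automation: look up a known fix by failure signature, run it, verify the result, and escalate to a human if no fix exists or verification fails. The signatures, remediation functions, and `verify` callback are hypothetical stand-ins; real actions would call your orchestration or cloud APIs.

```python
from typing import Callable


# Hypothetical remediation actions. In practice these would call your
# orchestration or cloud APIs; here they only return a description.
def restart_service(ctx: dict) -> str:
    return f"restarted {ctx['service']}"


def rollback_deployment(ctx: dict) -> str:
    return f"rolled back {ctx['service']} to {ctx['previous_version']}"


# Known failure signature -> automated fix.
KNOWN_FIXES: dict[str, Callable[[dict], str]] = {
    "process_crashloop": restart_service,
    "error_spike_after_deploy": rollback_deployment,
}


def first_response(signature: str, ctx: dict, verify: Callable[[dict], bool]) -> str:
    """Run the known fix if one exists and verify it; otherwise hand off to a human."""
    fix = KNOWN_FIXES.get(signature)
    if fix is None:
        return "escalated: no automated fix for this signature"
    action = fix(ctx)
    if verify(ctx):
        return f"auto-resolved: {action}"
    return f"escalated: {action}, but verification failed"


ctx = {"service": "orders-api", "previous_version": "v41"}
print(first_response("error_spike_after_deploy", ctx, verify=lambda c: True))
# auto-resolved: rolled back orders-api to v41
print(first_response("disk_full", ctx, verify=lambda c: True))
# escalated: no automated fix for this signature
```

Keeping verification and escalation in the same function is a deliberate choice: an automated fix that is never verified simply moves the delay to the verification stage.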

Step 5: Add Dependency Mapping and Ownership Context

During an incident, one of the biggest delays comes from identifying:

What is affected

What caused the issue

Who is responsible for fixing it

Dependency mapping solves this problem.

A well-maintained system provides:

Relationships between services and infrastructure

Impact visibility across business services

Clear ownership of components

Change history and recent updates

This allows teams to move directly to the right point of action instead of discovering it during the incident.
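Impact and ownership can be answered from the same dependency data by walking the edges in reverse. This sketch assumes a small, hypothetical service map with owners, of the kind a CMDB or discovery tool might hold; the service and team names are invented.

```python
from collections import deque

# Hypothetical service map with owners. "depends_on" points upstream.
SERVICES = {
    "checkout-app": {"owner": "web-team", "depends_on": ["orders-api", "identity"]},
    "orders-api": {"owner": "orders-team", "depends_on": ["orders-db"]},
    "reporting": {"owner": "data-team", "depends_on": ["orders-db"]},
    "orders-db": {"owner": "dba-team", "depends_on": []},
    "identity": {"owner": "iam-team", "depends_on": []},
}


def impacted_by(failed: str, services: dict) -> dict:
    """Every service that directly or transitively depends on `failed`, mapped
    to its owner (breadth-first search over reversed dependency edges)."""
    dependents = {name: [] for name in services}
    for name, meta in services.items():
        for dep in meta["depends_on"]:
            dependents.setdefault(dep, []).append(name)
    impacted, queue = {}, deque([failed])
    while queue:
        current = queue.popleft()
        for downstream in dependents.get(current, []):
            if downstream not in impacted:
                impacted[downstream] = services[downstream]["owner"]
                queue.append(downstream)
    return impacted


print("fix owner:", SERVICES["orders-db"]["owner"])
print("impacted:", impacted_by("orders-db", SERVICES))
```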

Step 6: Implement Multi-Level Alerting Based on SLOs

Not every issue requires the same level of urgency.

Many teams overload on-call engineers by treating all alerts as equally critical. This increases noise and slows response to real incidents.

Service Level Objective (SLO)-based alerting introduces structure:

Notify: Early warning signals for non-critical issues

Ticket: Issues that require attention but not immediate action

Page: Critical incidents that need immediate response

This ensures that attention goes where it matters most.

It also improves MTTR SLA compliance by aligning response urgency with business impact.
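One common way to implement SLO-based levels is error-budget burn rate: the observed error rate divided by the error rate the SLO allows. The sketch below assumes a 99.9% availability target and single-window thresholds chosen only for illustration; production setups, such as the multiwindow approach described in Google's SRE Workbook, usually evaluate several time windows before paging.

```python
SLO_TARGET = 0.999  # assumed availability objective (99.9%)

# Illustrative burn-rate thresholds, checked from most to least urgent.
ROUTES = [(10.0, "page"), (2.0, "ticket"), (1.0, "notify")]


def burn_rate(errors: int, requests: int, slo: float = SLO_TARGET) -> float:
    """How fast the error budget is being consumed; 1.0 means exactly on budget."""
    if requests == 0:
        return 0.0
    error_budget = 1 - slo
    return (errors / requests) / error_budget


def route(errors: int, requests: int) -> str:
    """Pick the most urgent response level whose threshold the burn rate meets."""
    rate = burn_rate(errors, requests)
    for threshold, action in ROUTES:
        if rate >= threshold:
            return action
    return "none"


print(route(150, 10_000))  # page   (burn rate about 15)
print(route(30, 10_000))   # ticket (about 3)
print(route(12, 10_000))   # notify (about 1.2)
print(route(5, 10_000))    # none   (about 0.5)
```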

Step 7: Close the Loop with Post-Incident Learning

Every incident is an opportunity to improve future MTTR.

High-performing teams do not stop at resolution. They analyze:

What delayed detection

What slowed triage

What made diagnosis difficult

Whether automation could have helped

This feedback is then used to:

Improve runbooks

Add automation

Refine alerting

Enhance observability coverage

Over time, this creates a compounding effect where each incident becomes easier and faster to resolve.

How Different Tools Reduce MTTR at Each Stage

Reducing Mean Time to Resolution (MTTR) is not driven by a single tool. It depends on how well different systems work together across detection, investigation, coordination, and resolution.

Most organizations already have the right categories of tools in place. The gap is in how effectively each layer contributes to faster incident resolution.

Each tool type addresses a specific MTTR bottleneck. When these layers operate in isolation, MTTR stays high. When they are connected, resolution becomes faster and more predictable.

1. AI-powered observability platforms

AI-powered observability platforms form the foundation of fast incident detection and investigation.

They provide:

Unified visibility across infrastructure, applications, and services

Real-time telemetry from metrics, logs, and traces

AI-driven correlation of signals across systems

Early detection of anomalies and performance deviations

Unlike traditional monitoring, these platforms do not just show system health. They help teams understand relationships between signals and identify likely root causes faster.

This directly reduces:

Detection time

Triage time

Initial diagnosis effort

By correlating data across layers, they reduce the need to manually piece together fragmented signals during an incident.

2. ITSM Platforms

IT Service Management (ITSM) platforms structure how incidents are managed once they are detected.

They provide:

Standardized incident workflows

Ticket creation, routing, and escalation

Communication and ownership management

ITSM systems ensure that incidents move through a controlled lifecycle instead of being handled in an ad hoc manner.

This helps reduce:

Escalation delays

Coordination overhead

SLA breaches

However, ITSM platforms do not reduce MTTR on their own. Their impact depends heavily on the quality of inputs from observability and AI-driven systems.

3. Automation Platforms

Automation platforms focus on reducing manual effort during incident resolution.

They enable:

Self-healing workflows

Automated runbook execution

Predefined remediation actions

Integration with alerting and ITSM systems

These platforms are especially effective for known and repeatable incident types.

By removing manual intervention from common resolution steps, they directly reduce:

Resolution time

On-call workload

Repetitive operational effort

Automation becomes increasingly important as environments scale and incident volume grows.

4. CMDB and Discovery tools

CMDB (Configuration Management Database) and discovery tools provide structural context for every incident.

They help teams understand:

What services and systems are affected

How components depend on each other

Who owns each asset or service

What recent changes may have contributed to the issue

This context is critical during triage and escalation.

Without it, teams spend valuable time identifying ownership and impact instead of focusing on resolution.

By providing dependency visibility, CMDB tools reduce:

Triage time

Escalation delays

Impact analysis effort

How to Measure MTTR Correctly [Implementation Checklist]

Use the checklist below to measure MTTR consistently and avoid the most common pitfalls.

1. Define your MTTR start and end events clearly

Establish a consistent rule for when MTTR begins and ends.

For most IT and SRE teams, MTTR should start at incident detection or alert creation and end only when the service is fully restored and verified.

Without a fixed definition, MTTR data cannot be compared across incidents or teams.
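A fixed start/end rule can be encoded once and reused for every incident. This sketch assumes hypothetical event names: it starts the clock at the earliest alert or user report, stops it at the last verified restoration (so a reopened incident keeps accruing time), and excludes incidents that are still open.

```python
from datetime import datetime
from typing import Optional

# Hypothetical event log for one incident that was reopened once.
events = [
    ("alert_created", datetime(2026, 6, 9, 8, 12)),
    ("reported_by_user", datetime(2026, 6, 9, 8, 20)),
    ("fix_applied", datetime(2026, 6, 9, 8, 55)),
    ("verified_restored", datetime(2026, 6, 9, 9, 5)),
    ("reopened", datetime(2026, 6, 9, 9, 40)),
    ("verified_restored", datetime(2026, 6, 9, 10, 15)),
]

START_EVENTS = {"alert_created", "reported_by_user"}  # whichever comes first
END_EVENT = "verified_restored"                       # not "fix_applied"


def resolution_minutes(log: list[tuple[str, datetime]]) -> Optional[float]:
    """Earliest start event to the last verified restoration, or None if the
    incident has no verification yet or was reopened after the last one."""
    starts = [t for name, t in log if name in START_EVENTS]
    ends = [t for name, t in log if name == END_EVENT]
    reopens = [t for name, t in log if name == "reopened"]
    if not starts or not ends or (reopens and max(reopens) > max(ends)):
        return None  # still open: exclude from MTTR until verified
    return (max(ends) - min(starts)).total_seconds() / 60


print(resolution_minutes(events))  # 123.0
```

Stopping the clock at `fix_applied` instead would report 43 minutes for the same incident, which is exactly the kind of inconsistency that makes MTTR impossible to compare.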

2. Separate MTTR by severity levels (P1, P2, P3, P4)

Do not rely on a single aggregated MTTR value. Each severity level has different impact, urgency, and resolution expectations.

Breaking MTTR down by severity helps identify where delays actually occur and prevents critical incidents from being hidden in averages.

3. Track Median MTTR Alongside Mean MTTR

Average values can be misleading in environments with uneven incident distribution. A small number of long-running incidents can distort the overall metric.

Median MTTR provides a more realistic view of typical resolution performance, especially in high-volume environments.

4. Review MTTR in Every Post-incident Review (PIR)

MTTR should not be treated as a static KPI. Every incident review should analyze where time was lost across detection, triage, diagnosis, and resolution.

This helps identify recurring bottlenecks and improves future response efficiency.

Conclusion

Mean Time to Resolution is more than an operational metric. It is a reflection of how well your systems, tools, and teams work together under pressure.

High MTTR is rarely caused by a single issue. It usually comes from a combination of fragmented visibility, manual triage, unclear ownership, and lack of automation.

The most effective way to reduce MTTR is not to focus on speed alone, but to remove friction across the entire incident lifecycle. This includes improving observability, reducing alert noise, standardizing response workflows, and introducing automation where possible.

When organizations approach MTTR as a system-level problem rather than a reporting metric, resolution times naturally improve, and incident handling becomes more predictable and controlled.

FAQs

What is Mean Time to Resolution (MTTR)?

Mean Time to Resolution (MTTR) is the average time taken to fully resolve an incident, from detection to service restoration and verification. It is commonly used in IT operations, NOC, and SRE environments to measure incident response efficiency.

What is the MTTR formula?

MTTR is calculated using the formula:

MTTR = Total Resolution Time / Number of Incidents

It represents the average time required to restore service across multiple incidents.

What is the difference between MTTR, MTTD, and MTTF?

MTTR (Mean Time to Resolution): Time to fully resolve an incident

MTTD (Mean Time to Detect): Time taken to identify an incident

MTTF (Mean Time to Failure): Average time a system operates before it fails

MTTR focuses on recovery, MTTD on detection, and MTTF on reliability.

What is a good MTTR benchmark?

Good MTTR depends on severity and industry. For critical (P1) incidents, leading organizations aim for 30 to 60 minutes. Lower severity incidents may take several hours or days depending on complexity and impact.

How can MTTR be reduced in IT operations?

MTTR can be reduced by improving observability, reducing alert noise, standardizing runbooks, implementing automation, and using AIOps for alert correlation and faster root cause analysis.

Why is MTTR important in SRE and ITSM?

MTTR is a key indicator of operational efficiency. It directly impacts system availability, customer experience, and SLA compliance. In SRE and ITSM practices, lower MTTR reflects faster recovery and more resilient systems.

Author

Jagdish Sajnani

Senior Content Strategist

Jagdish Sajnani is a B2B SaaS content strategist and writer. He has experience across different B2B verticals, including enterprise technology domains such as IT Service Management, AI-driven automation, observability, and IT operations. He specializes in translating complex technical systems into structured, engaging, and search-optimized content. His work improves product understanding, strengthens organic visibility, and supports B2B demand generation.