What Is Kubernetes Monitoring?

Arpit Sharma

Kubernetes monitoring is the practice of collecting, analyzing, and acting on metrics, logs, and traces from every layer of a Kubernetes environment — from cluster nodes and control plane components to individual pods, containers, and the applications running inside them.

Modern cloud-native applications rely on Kubernetes as their primary container orchestration platform. In 2026, Kubernetes runs critical enterprise workloads across financial services, healthcare, e-commerce, and virtually every industry that's adopted microservices architecture.

But orchestrating thousands of short-lived containers across hybrid and multi-cloud environments creates visibility challenges that traditional monitoring tools weren't designed to handle. Containers spin up and terminate in seconds. Services communicate across dynamic network topologies. Resource allocation shifts constantly based on demand.

Without comprehensive monitoring, you're operating blind in an environment where performance issues, security threats, and resource waste can escalate within minutes.

Core Components of Kubernetes Monitoring

Node Monitoring

Nodes are the physical or virtual machines that run your Kubernetes workloads. Node-level monitoring provides the foundation for understanding cluster health.

Resource utilization: Real-time tracking of CPU, memory, disk I/O, and network usage at the node level reveals capacity constraints before they impact workloads

Health and availability: Continuous health checks detect node failures, resource exhaustion, and hardware degradation

Predictive scaling: AI-driven analysis of historical utilization trends enables proactive node provisioning — scaling up before demand spikes hit, not after

Kubelet metrics: Advanced kubelet instrumentation exposes granular details about pod scheduling, container runtime performance, and volume operations
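As a rough illustration of the resource utilization check described above, the sketch below turns raw usage numbers into utilization fractions and lists nodes that are close to capacity. The `NodeSample` type, its field names, and the 85% threshold are assumptions made for this example, not part of any Kubernetes API.

```python
from dataclasses import dataclass


@dataclass
class NodeSample:
    """One hypothetical utilization sample for a node (field names are illustrative)."""
    name: str
    cpu_used_cores: float
    cpu_allocatable_cores: float
    mem_used_bytes: int
    mem_allocatable_bytes: int


def utilization(sample: NodeSample) -> dict[str, float]:
    """Return CPU and memory utilization as fractions of allocatable capacity."""
    return {
        "cpu": sample.cpu_used_cores / sample.cpu_allocatable_cores,
        "memory": sample.mem_used_bytes / sample.mem_allocatable_bytes,
    }


def nodes_under_pressure(samples: list[NodeSample], threshold: float = 0.85) -> list[str]:
    """List node names whose CPU or memory utilization meets or exceeds the threshold."""
    return [s.name for s in samples if max(utilization(s).values()) >= threshold]
```

In practice the samples would come from your metrics backend; the point is that capacity alerts are usually expressed relative to allocatable resources rather than as absolute numbers.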

Pod and Container Monitoring

Pods and containers are the fundamental execution units in Kubernetes. Their ephemeral nature makes monitoring both critical and challenging.

Resource efficiency: Per-container CPU and memory tracking highlights inefficiencies, right-sizing opportunities, and resource contention between co-located containers

Lifecycle events: Tracking restarts, crashes, evictions, and OOMKills reveals stability issues that aggregate metrics might mask

Log aggregation: Centralized, real-time log collection with AI-powered noise reduction surfaces meaningful events from the high volume of container output

Distributed tracing: Request-level tracing across containers within a pod provides deep insight into latency sources and dependency chains
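The lifecycle signals above can be read from a pod's status. The sketch below assumes the pod has already been fetched as a plain dictionary shaped like the Kubernetes Pod object (`status.containerStatuses[].restartCount` and `lastState.terminated.reason`). The function name and the restart limit of 3 are illustrative choices.

```python
def unstable_containers(pod: dict, max_restarts: int = 3) -> list[dict]:
    """Return containers that restarted too often or were last killed for OOM."""
    findings = []
    for cs in pod.get("status", {}).get("containerStatuses", []):
        # lastState.terminated is absent when the container has never been restarted.
        terminated = cs.get("lastState", {}).get("terminated") or {}
        reason = terminated.get("reason")
        restarts = cs.get("restartCount", 0)
        if restarts > max_restarts or reason == "OOMKilled":
            findings.append(
                {"container": cs["name"], "restarts": restarts, "last_reason": reason}
            )
    return findings
```

A check like this catches a single container that keeps getting OOMKilled even when the pod's average memory usage looks healthy.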

Control Plane Monitoring

The control plane — API server, etcd, scheduler, and controller manager — is the brain of your Kubernetes cluster. If it degrades, everything degrades.

API server health: Request latency, throughput, and error rates indicate whether the API server can handle your cluster's operational load

etcd performance: etcd is the cluster's state store, so its latency and storage health directly affect scheduling, configuration, and recovery capabilities

Anomaly alerting: Proactive alerts on control plane deviations catch problems before they cascade into workload-affecting outages
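API server latency is typically exposed as a histogram (for example, `apiserver_request_duration_seconds`), so percentiles have to be estimated from cumulative bucket counts. The sketch below shows one way to do that with linear interpolation inside the matching bucket, similar in spirit to what PromQL's `histogram_quantile` does. The bucket values in the usage note are made up for illustration.

```python
import math


def histogram_quantile(q: float, buckets: list[tuple[float, float]]) -> float:
    """Estimate the q-quantile from cumulative histogram buckets.

    buckets: (upper_bound, cumulative_count) pairs sorted by upper_bound,
    with math.inf as the final upper bound.
    """
    if not 0 < q <= 1:
        raise ValueError("q must be in (0, 1]")
    total = buckets[-1][1]
    if total == 0:
        return math.nan
    rank = q * total
    prev_bound, prev_count = 0.0, 0.0
    for bound, count in buckets:
        if count >= rank:
            if math.isinf(bound):
                # The quantile falls in the overflow bucket; report the last finite bound.
                return prev_bound
            # Assume observations are spread evenly within the bucket.
            fraction = (rank - prev_count) / (count - prev_count)
            return prev_bound + (bound - prev_bound) * fraction
        prev_bound, prev_count = bound, count
    return prev_bound
```

With buckets `[(0.1, 50), (0.5, 90), (1.0, 99), (math.inf, 100)]`, calling `histogram_quantile(0.75, ...)` lands in the second bucket and interpolates between 0.1 and 0.5 seconds. The accuracy of the estimate depends on how finely the buckets are spaced around the latencies you care about.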

Application Monitoring

Infrastructure metrics alone don't tell you whether your applications are performing well. Application-level monitoring connects infrastructure health to user experience.

APM integration: Application performance monitoring tools that integrate natively with Kubernetes provide context-rich views of service behavior within the cluster

Transaction tracing: Distributed tracing across microservices enables precise identification of latency bottlenecks and failure points

Business metrics: Latency, error rates, throughput, and custom business KPIs visualized within the Kubernetes context tie infrastructure performance to outcomes that matter

Service mesh telemetry: Platforms like Istio and Linkerd add observability into service-to-service communication without requiring code changes

Network Monitoring

Kubernetes networking is dynamic and complex. Monitoring it requires visibility into traffic flows, policy enforcement, and service communication.

Cluster traffic analysis: Visibility into internal pod-to-pod, pod-to-service, and cross-cluster communication patterns

Network policy validation: Continuous monitoring of network policy enforcement verifies that security boundaries are working as intended

Service mesh data: Service-to-service communication paths analyzed through mesh telemetry reveal dependency relationships and failure propagation

Anomaly detection: Real-time identification of latency spikes, dropped packets, and routing irregularities that could indicate misconfiguration or attack

Essential Tools and Technologies

Prometheus and Grafana

Prometheus remains the standard for Kubernetes metrics collection, and Grafana provides the visualization layer. Together they offer rich query capabilities, a growing library of community dashboards, and seamless integration with Kubernetes components and third-party exporters.
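To make the query capabilities concrete, here is a minimal sketch that builds a PromQL expression for per-pod CPU usage and the URL for Prometheus's instant-query HTTP endpoint (`/api/v1/query`). The helper names, the `prod` namespace, and the hostname in the usage note are assumptions for this example. `container_cpu_usage_seconds_total` is the cAdvisor metric commonly scraped from the kubelet.

```python
from urllib.parse import urlencode


def cpu_by_pod_query(namespace: str, window: str = "5m") -> str:
    """PromQL for CPU cores consumed per pod, averaged over the given window."""
    return (
        "sum by (pod) (rate(container_cpu_usage_seconds_total"
        f'{{namespace="{namespace}"}}[{window}]))'
    )


def instant_query_url(base_url: str, promql: str) -> str:
    """Build the URL for an instant query against the Prometheus HTTP API."""
    return f"{base_url.rstrip('/')}/api/v1/query?{urlencode({'query': promql})}"
```

For example, `instant_query_url("http://prometheus.example:9090", cpu_by_pod_query("prod"))` yields a URL you could fetch with any HTTP client. The same expression can be pasted into a Grafana panel unchanged.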

eBPF-Based Observability

Extended Berkeley Packet Filter (eBPF) technology enables kernel-level data collection with minimal performance overhead. It's become essential for security monitoring, network observability, and performance profiling in production Kubernetes environments. Tools like Cilium and Pixie leverage eBPF for low-latency, high-resolution observability that traditional agents can't match.

Service Mesh Monitoring

Service meshes like Istio and Linkerd provide built-in telemetry — metrics, logs, and traces — for service-to-service communication without requiring application code changes. They enable monitoring of traffic routing, mTLS encryption, retry behavior, and circuit breaking.

AI-Powered Anomaly Detection

Machine learning models identify outliers and trends across logs, metrics, and traces that rule-based alerting would miss. Automated correlation reduces alert noise, while proactive recommendations optimize resource usage and detect performance regressions before users notice.
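Production anomaly detection relies on far richer models, but the core idea of learning a baseline and flagging deviations can be sketched with a rolling z-score. This is a simple statistical stand-in rather than a machine learning model, and the window size and threshold are arbitrary assumptions.

```python
from statistics import mean, stdev


def zscore_anomalies(values: list[float], window: int = 5, threshold: float = 3.0) -> list[int]:
    """Return indexes whose value deviates strongly from the preceding window."""
    anomalies = []
    for i in range(window, len(values)):
        baseline = values[i - window:i]
        mu, sigma = mean(baseline), stdev(baseline)
        if sigma == 0:
            # A perfectly flat baseline gives no scale to judge against; this
            # sketch skips it, so a real detector would need a fallback here.
            continue
        if abs(values[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies
```

Unlike a static threshold, the baseline here moves with the data, so a service that normally runs at 100 ms is judged differently from one that normally runs at 10 ms.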

Best Practices for Kubernetes Monitoring

Build a Full Observability Strategy

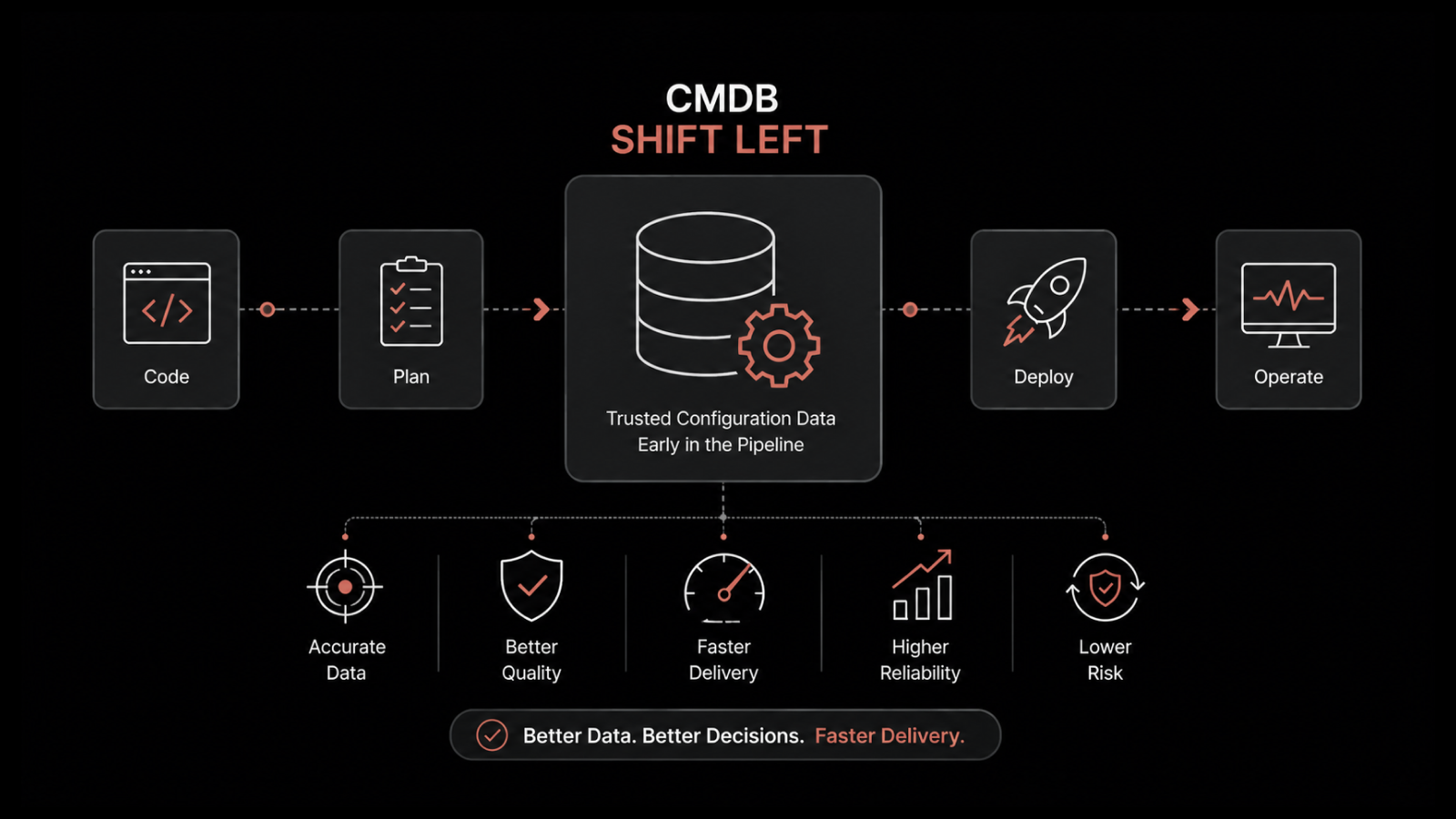

Monitoring CPU and memory isn't enough. A comprehensive observability strategy integrates three pillars: metrics, logs, and distributed traces. Together, they answer both "what happened?" and "why did it happen?"

Add event tracking for Kubernetes-native events (deployments, scaling operations, configuration changes) to provide the context that makes metrics and logs actionable.

Implement Context-Aware Alerting

Not all alerts deserve the same response. Context-aware alerting systems evaluate the relationship between symptoms and their actual impact — a high CPU alert on an idle node doesn't warrant the same response as high CPU on a node running production workloads.

AI-driven alerting examines cluster topology, application dependencies, and historical patterns to generate relevant notifications and reduce alert fatigue.
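The idle-node example above can be expressed as a small routing rule. The sketch below is a deliberately simple, rule-based stand-in for context-aware alerting. The `AlertContext` fields, the severity labels, and the 90% threshold are all assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class AlertContext:
    """Hypothetical context gathered about a node when a CPU alert fires."""
    cpu_utilization: float   # fraction of allocatable CPU in use
    production_pods: int     # pods on the node labeled as production
    cordoned: bool = False   # node already marked unschedulable


def alert_severity(ctx: AlertContext, cpu_threshold: float = 0.9) -> str:
    """Map the same symptom to different responses depending on impact."""
    if ctx.cpu_utilization < cpu_threshold:
        return "none"
    if ctx.cordoned or ctx.production_pods == 0:
        # High CPU with nothing important scheduled: record it, but page nobody.
        return "info"
    return "page"
```

The same 95% CPU reading produces `"info"` on a node with no production pods and `"page"` on a node serving production traffic.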

Centralize Log Management

As clusters scale, log volume and log management complexity grow quickly. Centralize log aggregation across clusters and namespaces to enable efficient indexing, searching, and analysis. AI-powered filtering can then surface actionable insights and help reduce mean time to resolution (MTTR).

Design Role-Specific Dashboards

DevOps engineers, developers, and product managers need different views of the same data. Build dashboard hierarchies that present the right metrics and visual indicators to each stakeholder group, enabling them to track cluster health within the scope of their responsibilities.

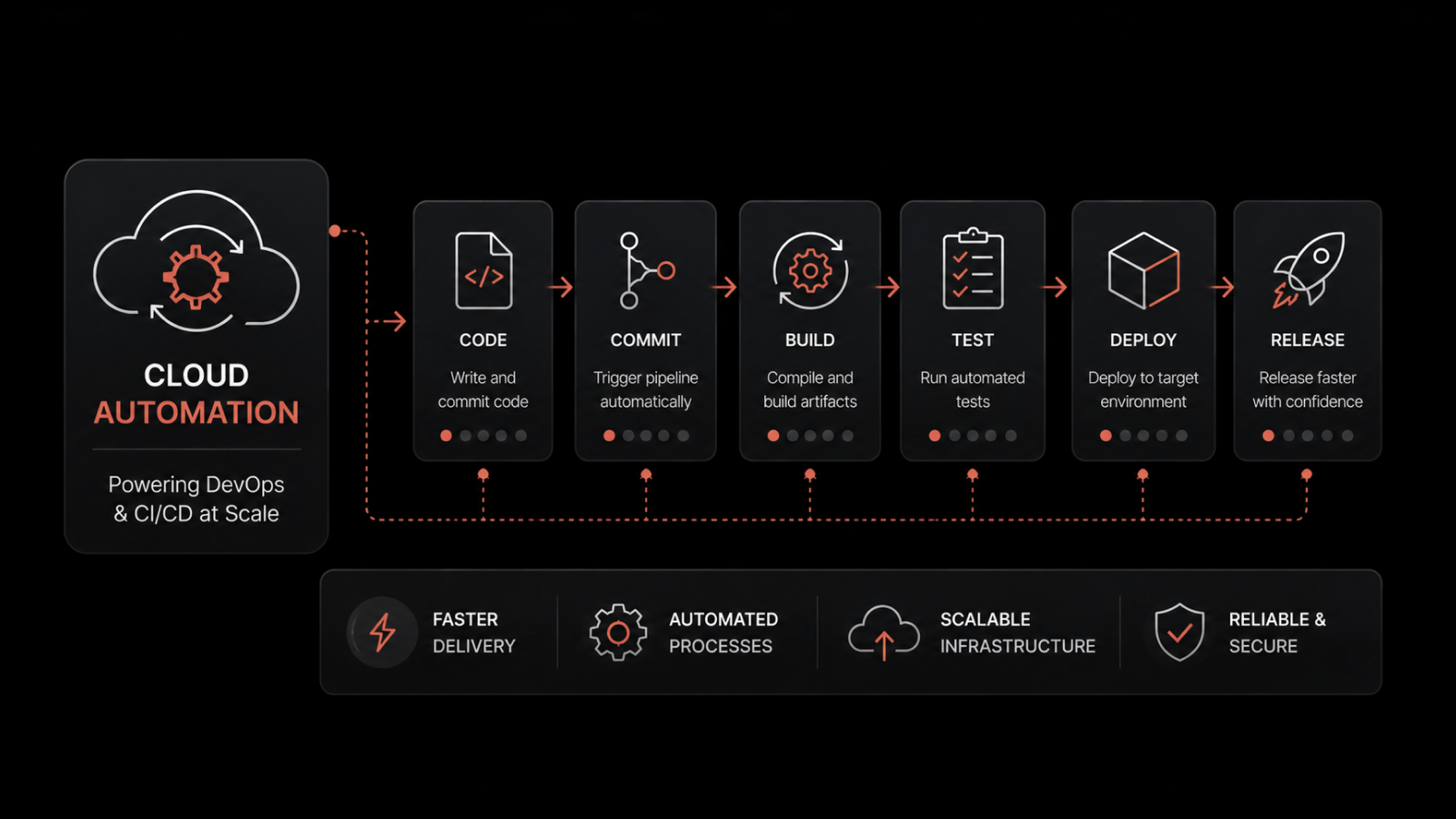

Integrate Monitoring Into CI/CD Pipelines

Shift observability left by embedding health checks, performance benchmarks, and anomaly detection into your CI/CD pipelines. Catching regressions in staging prevents them from reaching production, where remediation is typically slower and more expensive.
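One way to embed a performance benchmark in a pipeline is a gate that compares a candidate build's metrics against a stored baseline. The sketch below assumes metrics where lower is better (latency, error rate). The metric names and the 10% tolerance are hypothetical.

```python
def find_regressions(
    baseline: dict[str, float],
    candidate: dict[str, float],
    tolerance: float = 0.10,
) -> dict[str, tuple[float, float]]:
    """Return metrics where the candidate exceeds baseline by more than tolerance.

    Metrics missing from the candidate are skipped rather than treated as failures.
    """
    regressions = {}
    for name, base in baseline.items():
        new = candidate.get(name)
        if new is not None and new > base * (1 + tolerance):
            regressions[name] = (base, new)
    return regressions
```

A pipeline step could call this after a staging load test and exit with a non-zero status when the returned dictionary is not empty, which blocks the deployment.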

Implement RBAC for Monitoring Data

Not everyone needs access to all observability data. Sensitive metrics, logs, and traces require access controls. Role-based access control (RBAC) for monitoring dashboards and APIs ensures compliance while limiting data exposure to personnel who need it.

Optimize Monitoring Costs

Storing every metric at full resolution indefinitely isn't sustainable. Balance data granularity against cost with tiered storage, appropriate retention policies, and aggregation techniques that reduce ingestion volumes without sacrificing the insights you need for troubleshooting and capacity planning.
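Aggregation is the easiest of these techniques to picture. The sketch below downsamples raw samples into fixed-width time buckets by averaging, which is roughly what a tiered retention policy does when it moves older data to a coarser resolution. The function name and the input shape are assumptions for this example.

```python
def downsample(points: list[tuple[int, float]], bucket_seconds: int) -> list[tuple[int, float]]:
    """Average (unix_timestamp, value) samples into fixed-width time buckets."""
    buckets: dict[int, list[float]] = {}
    for ts, value in points:
        start = ts - ts % bucket_seconds  # align each sample to its bucket start
        buckets.setdefault(start, []).append(value)
    return [(start, sum(vs) / len(vs)) for start, vs in sorted(buckets.items())]
```

Going from 15-second to 1-minute resolution cuts stored points by roughly a factor of four. Averaging hides short spikes, so keeping a max or a percentile alongside the mean is a common compromise for data you may need when troubleshooting.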

The Future of Kubernetes Monitoring

AI-Driven Autonomous Operations

Expect observability platforms to move beyond detection into autonomous remediation — identifying issues, determining root cause, and executing fixes without human intervention for well-understood failure patterns.

Security and Observability Convergence

Monitoring and security tooling are merging. Unified platforms that correlate performance data with security signals enable faster threat detection and more informed incident response.

eBPF Adoption Expansion

eBPF will become the default instrumentation layer for Kubernetes environments, providing unprecedented kernel-level visibility into system calls, network flows, and process behavior.

OpenTelemetry Standardization

OpenTelemetry is becoming the vendor-neutral standard for instrumentation. Its adoption reduces lock-in, simplifies multi-tool environments, and ensures consistent telemetry across heterogeneous infrastructure.

People Also Ask

What should you monitor in a Kubernetes cluster?

Monitor five layers: node resources (CPU, memory, disk, network), pod and container health (restarts, crashes, resource usage), control plane components (API server, etcd), application performance (latency, errors, throughput), and network traffic (policy enforcement, service communication).

What's the difference between Kubernetes monitoring and observability?

Monitoring tracks predefined metrics and alerts when thresholds are crossed. Observability goes further — it combines metrics, logs, and traces to let you investigate unknown problems and understand system behavior without having to predict every failure mode in advance.

Is Prometheus enough for Kubernetes monitoring?

Prometheus excels at metrics collection but covers only one pillar of observability. A complete strategy also requires centralized logging and distributed tracing, and often benefits from AI-powered analysis. Prometheus is a strong foundation, but most production environments need additional tools for full coverage.

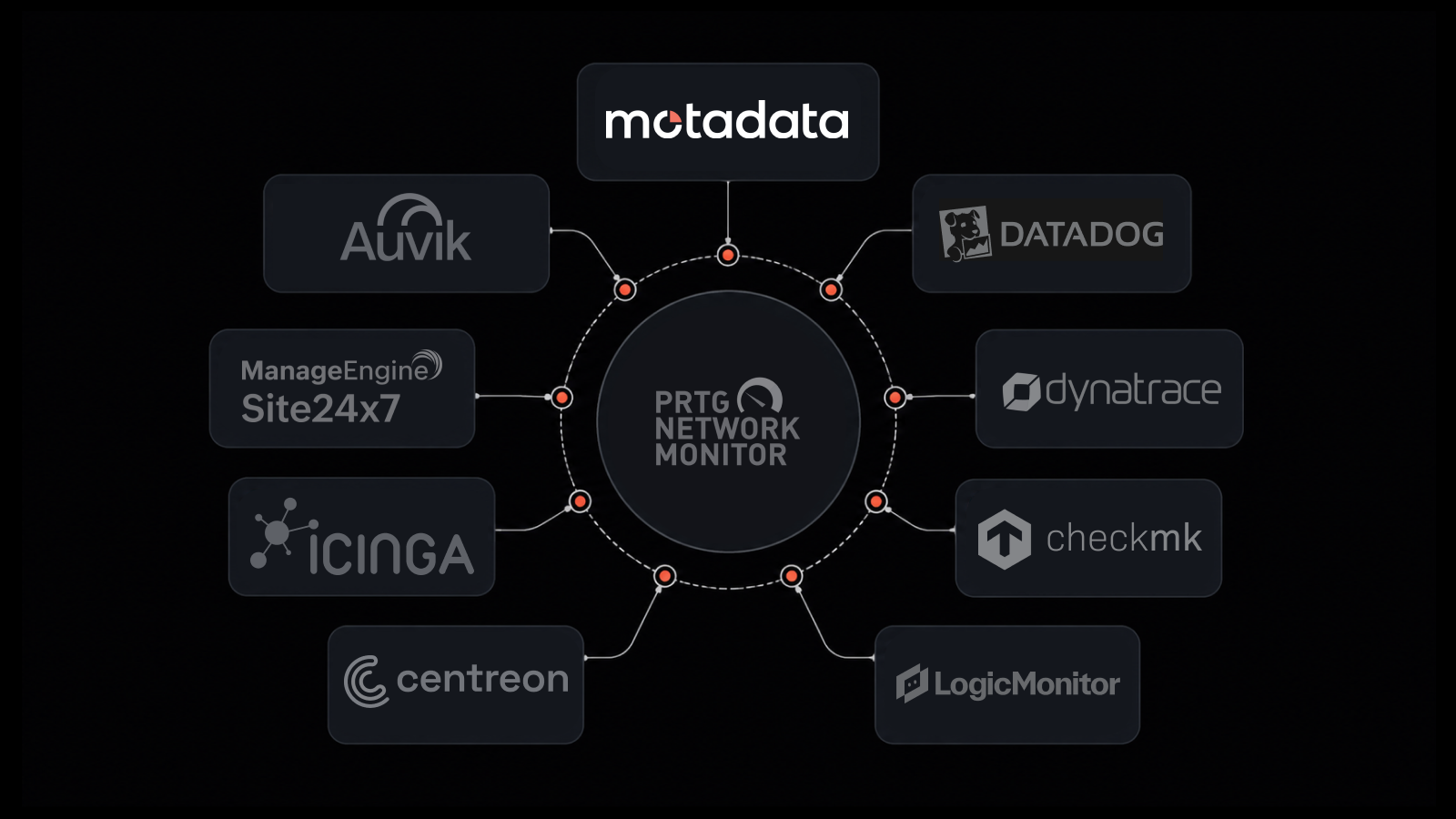

Monitor Kubernetes With Confidence Using Motadata

Motadata's AI-native observability platform delivers comprehensive Kubernetes monitoring across every layer — from nodes and control plane to applications and network traffic. With intelligent anomaly detection, predictive alerting, and unified dashboards, you get the visibility needed to operate complex K8s environments with confidence.

Don't let container complexity outpace your monitoring capabilities. Get full-stack Kubernetes observability from a single platform.


FAQs

What is Kubernetes monitoring?

Kubernetes monitoring is the practice of collecting and analyzing metrics, logs, and traces from every component of a Kubernetes environment — nodes, pods, containers, control plane, applications, and network — to ensure performance, reliability, and security.

Why is Kubernetes monitoring important?

Kubernetes environments are dynamic and complex. Containers are ephemeral, services communicate across shifting topologies, and resource allocation changes constantly. Without monitoring, performance degradation, security threats, and resource waste go undetected until they cause outages or cost overruns.

What are the most popular Kubernetes monitoring tools?

Prometheus and Grafana remain the most widely adopted open-source stack. eBPF-based tools like Cilium and Pixie provide kernel-level observability. Cloud providers offer managed solutions (Amazon CloudWatch, Azure Monitor, Google Cloud Observability). AI-native platforms like Motadata provide unified, intelligent monitoring across all layers.

How does AI improve Kubernetes monitoring?

AI-powered monitoring learns baseline behavior, detects anomalies that rule-based alerts miss, correlates events across data sources to reduce noise, and provides predictive insights for capacity planning and proactive remediation.

What is eBPF and why does it matter for Kubernetes monitoring?

eBPF (extended Berkeley Packet Filter) lets sandboxed programs run inside the Linux kernel without modifying kernel source code or loading kernel modules. For Kubernetes monitoring, this means high-resolution visibility into network traffic, system calls, and process behavior with minimal performance overhead and without instrumenting each application, which is difficult to achieve with traditional agent-based monitoring.

Author

Arpit Sharma

Senior Content Marketer

Arpit Sharma is a Senior Content Marketer at Motadata with over 8 years of experience in content writing. Specializing in telecom, fintech, AIOps, and ServiceOps, Arpit crafts insightful and engaging content that resonates with industry professionals. Beyond his professional expertise, he is an avid reader, enjoys running, and loves exploring new places.