How to Tackle Cloud Network Complexity With Observability

Arpit Sharma

Cloud adoption has fundamentally changed how networks behave. What used to be a manageable set of physical devices in a data center is now a sprawling web of microservices, containers, multi-cloud deployments, and ephemeral resources that spin up and down based on demand. When something breaks in this environment, traditional monitoring tools fall short. They can tell you that a metric crossed a threshold, but they can't explain why -- and in a distributed cloud network, the "why" is everything.

That's where observability comes in. Organizations that adopt observability-driven approaches report 60-70% reductions in mean time to resolution (MTTR), according to industry benchmarks. The difference isn't just speed -- it's the ability to diagnose issues you've never seen before, in systems that are constantly changing.

This guide explores how observability addresses cloud network complexity, why traditional monitoring methods fail in modern environments, and how to implement an observability strategy that keeps your cloud infrastructure performing at its best.

Cloud network observability is the practice of using metrics, logs, and traces to understand the internal state of a distributed cloud network. Unlike traditional monitoring that checks predefined conditions, observability enables teams to ask arbitrary questions about system behavior and find answers in real time.

Why Traditional Monitoring Fails in Cloud Networks

Cloud networks aren't just bigger versions of on-premises networks. They're architecturally different, and those differences break assumptions that traditional monitoring tools depend on.

Dynamic and Ephemeral Infrastructure

On-premises infrastructure is relatively stable. Servers, switches, and routers have fixed IP addresses and long lifecycles. Traditional monitoring tools register these devices once and track them over time.

Cloud infrastructure is the opposite. Auto-scaling groups add and remove instances based on load. Serverless functions exist only for the duration of a request. Containers launch, run, and terminate in seconds. Traditional tools that rely on static device inventories can't keep up -- by the time they discover a new resource, it may already be gone.

This gap means cloud teams face blind spots where infrastructure exists but isn't monitored, leading to missed failures and extended resolution times.
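One way to make that blind spot concrete is to compare what currently exists against what is actually being monitored. The following is a minimal sketch, assuming you can already list live resources and monitored targets; the `Resource` type, the IDs, and both function names are hypothetical and not taken from any specific tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Resource:
    resource_id: str  # hypothetical identifier, e.g. an instance or container ID
    kind: str         # e.g. "instance", "container", "function"


def find_blind_spots(discovered: set[Resource], monitored_ids: set[str]) -> list[Resource]:
    """Return resources that exist but have no monitoring attached."""
    missing = [r for r in discovered if r.resource_id not in monitored_ids]
    return sorted(missing, key=lambda r: r.resource_id)


def find_stale_targets(discovered: set[Resource], monitored_ids: set[str]) -> list[str]:
    """Return monitored IDs whose resource no longer exists."""
    live_ids = {r.resource_id for r in discovered}
    return sorted(monitored_ids - live_ids)


# Illustrative data: two resources were never registered, one target is gone.
discovered = {
    Resource("i-001", "instance"),
    Resource("i-002", "instance"),
    Resource("c-9f3", "container"),
}
monitored_ids = {"i-001", "i-000"}

blind_spots = find_blind_spots(discovered, monitored_ids)
stale = find_stale_targets(discovered, monitored_ids)
```

Running a comparison like this on a short interval, or triggering it from resource lifecycle events, narrows the window in which an ephemeral resource can live and die unobserved.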

Distributed Dependencies Across Microservices

Modern cloud applications use microservices architectures where a single user request might traverse dozens of services, APIs, and databases. Each service can fail independently, and failures cascade in unpredictable ways.

Traditional monitoring tools were built for monolithic applications where the relationship between components was straightforward. In a microservices environment, you need to trace a request across every service it touches to understand where delays or errors originate. Without that end-to-end visibility, network troubleshooting becomes guesswork.

Multi-Cloud and Hybrid Visibility Gaps

Many organizations run workloads across multiple cloud providers (AWS, Azure, Google Cloud) alongside on-premises infrastructure. Each provider has its own monitoring tools, metrics formats, and APIs. Without a unified observability layer, teams end up context-switching between multiple consoles to investigate a single incident.

The result: fragmented data, inconsistent alerting, and longer resolution times because no single view shows the full picture.
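A unified layer usually starts with normalizing each provider's data into one schema. The sketch below assumes two made-up payload shapes (`provider_a` and `provider_b`); the field names are hypothetical and do not reflect any real cloud provider's API. It only illustrates converting units and timestamps into a single `MetricPoint` record.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class MetricPoint:
    source: str
    name: str
    value: float
    unit: str
    timestamp: datetime


def from_provider_a(payload: dict) -> MetricPoint:
    # Hypothetical shape: epoch seconds, latency reported in milliseconds.
    return MetricPoint(
        source="provider_a",
        name=payload["metric"],
        value=float(payload["value_ms"]),
        unit="ms",
        timestamp=datetime.fromtimestamp(payload["epoch_s"], tz=timezone.utc),
    )


def from_provider_b(payload: dict) -> MetricPoint:
    # Hypothetical shape: ISO 8601 timestamp, latency reported in seconds.
    return MetricPoint(
        source="provider_b",
        name=payload["name"],
        value=float(payload["seconds"]) * 1000.0,
        unit="ms",
        timestamp=datetime.fromisoformat(payload["time"]),
    )


points = [
    from_provider_b({"name": "lb_latency", "seconds": 0.25, "time": "2023-11-14T22:13:30+00:00"}),
    from_provider_a({"metric": "lb_latency", "value_ms": 120, "epoch_s": 1700000000}),
]
# Once normalized, points from different providers sort onto one timeline.
unified = sorted(points, key=lambda p: p.timestamp)
```

With every point in the same unit and time zone, a single query or dashboard can cover an incident that spans providers.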

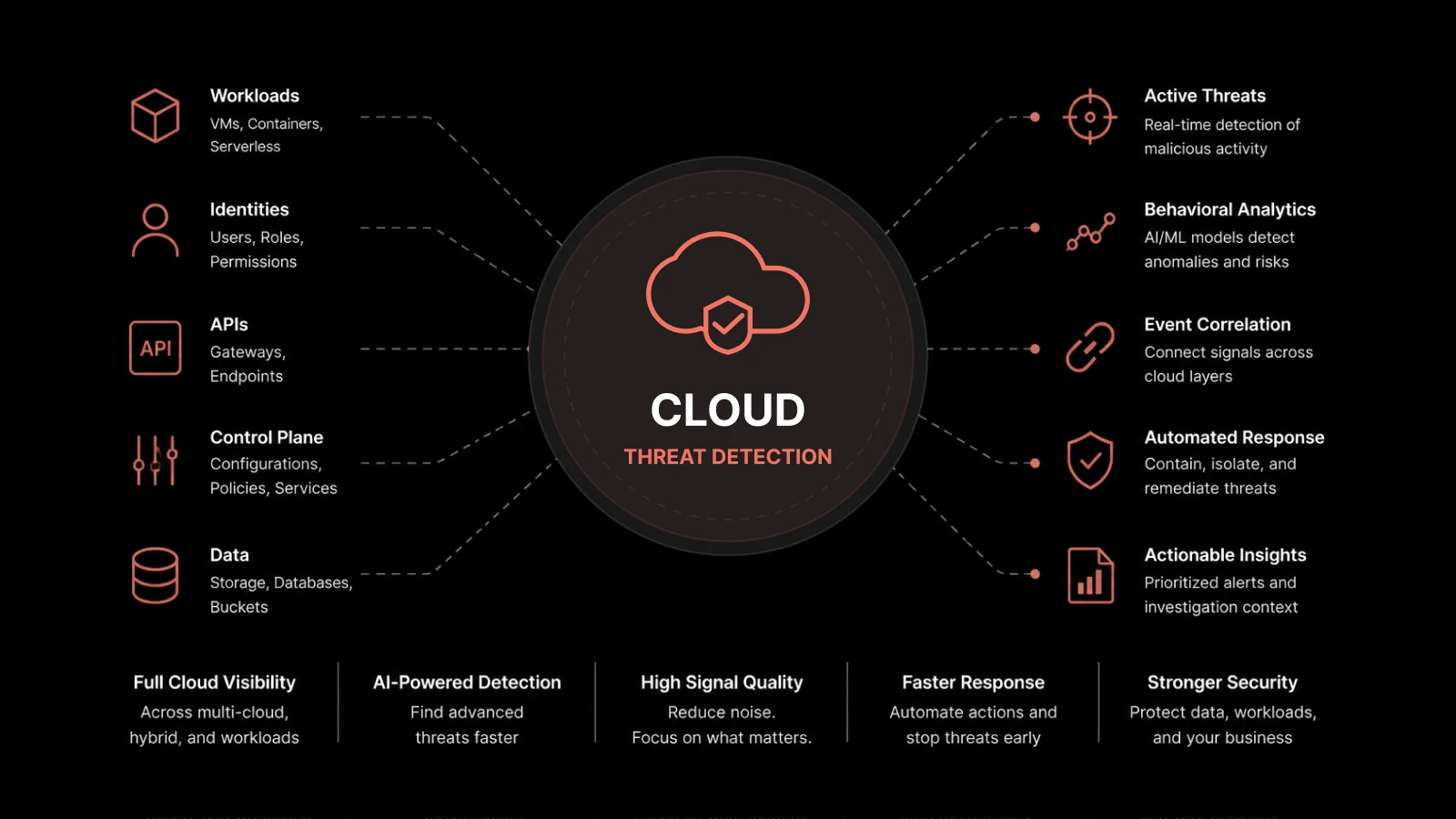

Evolving Security Threats

Cloud networks expand the attack surface. Ephemeral resources, API-driven access, and shared infrastructure create security challenges that static monitoring can't address. Identifying unusual access patterns, detecting lateral movement, and correlating security events across cloud boundaries requires the depth and flexibility that observability provides.

The Three Pillars of Observability

Observability rests on three data types, each providing a different perspective on system behavior. Individually, each pillar is valuable. Together, they provide the complete context needed to diagnose and resolve issues in distributed systems.

Metrics: Quantifying System Health

Metrics are numerical measurements collected at regular intervals that quantify system performance. Think of them as the vital signs of your network: latency, throughput, packet loss, error rates, CPU utilization, and memory consumption.

Metrics excel at answering "what" and "when" questions. They tell you that response latency increased by 40ms starting at 2:15 PM, or that packet loss spiked on a specific network segment. Real-time metrics enable teams to detect performance degradation quickly and trigger alerts based on threshold breaches or anomaly detection.

Key cloud network metrics to track include bandwidth utilization, connection counts, DNS resolution time, load balancer health, and inter-service latency.

Logs: Capturing Event Context

Logs are timestamped text records of events occurring within your systems. They capture the details that metrics can't -- error messages, stack traces, configuration changes, authentication events, and API call parameters.

Logs answer "what happened" in granular detail. When metrics show a spike, logs explain the cause: a misconfigured load balancer rejecting traffic, a deployment introducing a new error, or an authentication service timing out.

For effective log analysis, structured logging (JSON format) with consistent field names dramatically improves search and correlation speed. Unstructured text logs are still common but require more processing effort to extract actionable information. Network administrators should maintain access logs, error logs, application logs, and security logs in a centralized repository for efficient analysis.
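As an illustration of structured logging, here is a minimal sketch using only Python's standard `logging` and `json` modules. The field names (`service`, `trace_id`, `status_code`) and the logger name are assumptions chosen for the example; what matters is that every record uses the same keys.

```python
import io
import json
import logging
from datetime import datetime, timezone


class JsonFormatter(logging.Formatter):
    """Render each log record as a single JSON object with fixed field names."""

    def format(self, record: logging.LogRecord) -> str:
        entry = {
            "timestamp": datetime.fromtimestamp(record.created, tz=timezone.utc).isoformat(),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        # Fields passed via `extra=` become attributes on the record.
        for key in ("service", "trace_id", "status_code"):
            if hasattr(record, key):
                entry[key] = getattr(record, key)
        return json.dumps(entry)


stream = io.StringIO()  # stand-in for stdout or a log shipper
handler = logging.StreamHandler(stream)
handler.setFormatter(JsonFormatter())

logger = logging.getLogger("checkout")
logger.setLevel(logging.INFO)
logger.addHandler(handler)
logger.propagate = False

logger.error(
    "upstream timeout",
    extra={"service": "payment-gateway", "trace_id": "abc123", "status_code": 504},
)

parsed = json.loads(stream.getvalue())
```

Including a `trace_id` in every record is what later lets a log line be matched to the trace of the request that produced it.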

Traces: Mapping Request Journeys

In distributed systems, traces follow a single request as it travels across services, APIs, databases, and network components. Each segment of the journey (called a "span") records timing, status, and metadata.

Traces answer "where" questions. When a user reports slow performance, traces show exactly which service in the chain is introducing delay. If Service A calls Service B, which queries a database, traces reveal whether the bottleneck is in Service A's processing, Service B's logic, or the database query itself.

Distributed tracing is the pillar that makes observability practical in microservices architectures. Without it, diagnosing latency or errors in a 20-service request chain is nearly impossible.
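To show how spans pinpoint a bottleneck, the sketch below computes each span's "self time" (its duration minus the time spent in its direct children) for the Service A, Service B, database chain described above. The `Span` type and the timings are hypothetical, and the calculation assumes child spans run one after another rather than in parallel.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Span:
    span_id: str
    parent_id: Optional[str]
    name: str
    start_ms: float
    end_ms: float

    @property
    def duration_ms(self) -> float:
        return self.end_ms - self.start_ms


def self_times(spans: list[Span]) -> dict[str, float]:
    """Time spent in each span, excluding its direct children.

    Assumes child spans do not overlap one another.
    """
    result = {s.span_id: s.duration_ms for s in spans}
    for s in spans:
        if s.parent_id in result:
            result[s.parent_id] -= s.duration_ms
    return result


def bottleneck(spans: list[Span]) -> Span:
    """Return the span with the largest self time."""
    times = self_times(spans)
    return max(spans, key=lambda s: times[s.span_id])


trace = [
    Span("1", None, "service-a: handle request", 0, 500),
    Span("2", "1", "service-b: lookup", 20, 480),
    Span("3", "2", "db: SELECT orders", 40, 460),
]
slowest = bottleneck(trace)
```

Here Service A and Service B each account for only 40 ms of their own work, while the database query accounts for 420 ms, so the query is where to look first.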

Correlating Pillars for Root Cause Analysis

Each pillar provides valuable information independently, but the real power of observability emerges when you correlate data across all three. Here's how correlation works in practice:

Metrics detect that API response latency increased by 200ms starting at 3:00 PM.

Logs reveal that a database connection pool started throwing timeout errors at 2:58 PM.

Traces show that all slow requests are bottlenecking at the same database query in Service B.

This correlated view identifies the root cause -- a database query performance issue -- in minutes rather than hours. Without correlation, each team would investigate their own data silo, potentially chasing symptoms rather than the cause.

Data correlation also accelerates root cause analysis by connecting related events across systems automatically, reducing the manual investigation work that extends MTTR.
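The correlation described in this section can be sketched in a few lines. The example below uses made-up records that mirror the scenario (a latency alert at 3:00 PM, pool timeouts shortly before it, slow traces pointing at Service B); the record shapes and both helper functions are assumptions for illustration, not a real platform's data model.

```python
from collections import Counter
from datetime import datetime, timedelta

# Hypothetical telemetry mirroring the example in this section.
latency_alert_at = datetime(2024, 1, 1, 15, 0)

logs = [
    {"time": datetime(2024, 1, 1, 14, 30), "service": "service-a", "level": "INFO",
     "message": "deploy finished"},
    {"time": datetime(2024, 1, 1, 14, 58), "service": "service-b", "level": "ERROR",
     "message": "connection pool timeout"},
    {"time": datetime(2024, 1, 1, 14, 59), "service": "service-b", "level": "ERROR",
     "message": "connection pool timeout"},
]

slow_traces = [
    {"trace_id": "t1", "slowest_span": "service-b: db query"},
    {"trace_id": "t2", "slowest_span": "service-b: db query"},
    {"trace_id": "t3", "slowest_span": "service-a: render"},
]


def errors_before(alert_time, records, lookback=timedelta(minutes=5)):
    """Error logs inside the lookback window that ends at the alert time."""
    start = alert_time - lookback
    return [r for r in records if r["level"] == "ERROR" and start <= r["time"] <= alert_time]


def most_common_slow_span(traces):
    """The span that is most often the slowest one across slow traces."""
    counts = Counter(t["slowest_span"] for t in traces)
    return counts.most_common(1)[0]


suspect_logs = errors_before(latency_alert_at, logs)
suspect_span, hits = most_common_slow_span(slow_traces)
```

When the error logs and the dominant slow span both name the same service, as they do here, that agreement is the signal that the investigation has moved from symptom to cause.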

Real-World Cloud Troubleshooting Scenarios

Scenario 1: Intermittent Connectivity Drops

An e-commerce platform experiences random checkout failures. Customers report errors during payment processing, but the failures are intermittent and don't appear in basic uptime checks.

Metrics show periodic packet loss spikes on the network segment connecting the application tier to the payment gateway. Logs reveal that a load balancer is intermittently rejecting traffic from specific backend instances due to health check failures. Traces confirm that the failed requests consistently route through two specific instances.

The root cause: a misconfigured health check threshold was marking healthy instances as unhealthy during brief CPU spikes caused by garbage collection pauses. Adjusting the health check sensitivity and tuning GC parameters resolved the issue.
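The effect of health check sensitivity can be illustrated with a small sketch. It assumes a load balancer that marks an instance unhealthy after a configurable number of consecutive failed checks; the `HealthTracker` class and its `unhealthy_threshold` parameter are hypothetical names, not a specific load balancer's settings.

```python
class HealthTracker:
    """Marks an instance unhealthy only after N consecutive failed checks."""

    def __init__(self, unhealthy_threshold: int) -> None:
        if unhealthy_threshold < 1:
            raise ValueError("unhealthy_threshold must be at least 1")
        self.unhealthy_threshold = unhealthy_threshold
        self.consecutive_failures = 0

    def record(self, check_passed: bool) -> bool:
        """Record one check result and return whether the instance is healthy."""
        if check_passed:
            self.consecutive_failures = 0
        else:
            self.consecutive_failures += 1
        return self.consecutive_failures < self.unhealthy_threshold


# One failed check caused by a brief pause, surrounded by passing checks.
checks = [True, True, False, True, True]

strict = HealthTracker(unhealthy_threshold=1)
tolerant = HealthTracker(unhealthy_threshold=3)

strict_states = [strict.record(c) for c in checks]
tolerant_states = [tolerant.record(c) for c in checks]
```

The strict tracker pulls the instance out of rotation on a single missed check, which is the behavior that caused the intermittent failures; the tolerant one rides through the pause. The trade-off is that a higher threshold also delays detection of a genuinely failed instance.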

Scenario 2: Security Anomaly Detection

The security team for a SaaS application notices unusual login patterns in its SIEM system. Multiple accounts show failed login attempts from geographic locations that don't match their normal access patterns.

Logs show hundreds of failed authentication attempts using valid usernames but incorrect passwords -- a credential-stuffing attack using leaked credentials from another breach. Metrics confirm an abnormal spike in authentication service load. Traces reveal that the attack is targeting a legacy API endpoint that bypasses rate limiting.

The team blocks the attacking IP ranges, enforces rate limiting on the legacy endpoint, and enables mandatory MFA for affected accounts -- all within 45 minutes of initial detection.
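Rate limiting of the kind applied to the legacy endpoint can be sketched as a sliding-window counter per client. This is one common approach and an assumption on our part; the scenario does not say which algorithm the team used. The class name, the limit, and the window size are illustrative, and the client address comes from a range reserved for documentation.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allows at most `limit` attempts per client within `window_s` seconds."""

    def __init__(self, limit: int, window_s: float) -> None:
        self.limit = limit
        self.window_s = window_s
        self._attempts: dict[str, deque] = defaultdict(deque)

    def allow(self, client: str, now: float) -> bool:
        attempts = self._attempts[client]
        # Drop attempts that have aged out of the window.
        while attempts and now - attempts[0] >= self.window_s:
            attempts.popleft()
        if len(attempts) >= self.limit:
            return False
        attempts.append(now)
        return True


limiter = SlidingWindowLimiter(limit=3, window_s=60)
# Timestamps are passed in explicitly so the example is deterministic.
results = [limiter.allow("203.0.113.7", now=t) for t in (0, 1, 2, 3, 61)]
```

The fourth attempt is rejected because three attempts already fall inside the 60-second window; by second 61 the two oldest have expired and the client is allowed again. Credential-stuffing traffic often rotates source addresses, so keying the limiter on the targeted account as well as the client is worth considering.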

Step-by-Step: Diagnosing Slow Application Performance

When users report slow page loads, here's a systematic observability-driven approach:

Step 1: Check performance metrics for increased latency in API response times. Identify when the degradation started and which endpoints are affected.

Step 2: Use distributed tracing to follow slow requests through your service chain. Filter by high-latency requests and identify which service or database call introduces the most delay.

Step 3: Review resource utilization metrics (CPU, memory, network) on the identified services. Look for resource contention or capacity limits.

Step 4: Examine application and server logs for the identified time window. Look for error messages, slow query warnings, or connection pool exhaustion.

Step 5: Correlate findings to identify the root cause. Apply the fix -- whether it's query optimization, resource scaling, configuration change, or code deployment.

Step 6: Re-run distributed traces to confirm the fix resolved the issue and latency has returned to baseline.
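The final check in Step 6 can be made explicit by comparing a latency percentile before and after the fix. The sketch below uses a nearest-rank p95 and a 10% tolerance; both choices, along with the sample values and function names, are assumptions for illustration.

```python
import math


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile; pct is in the range (0, 100]."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[rank - 1]


def back_to_baseline(baseline_ms: list[float], current_ms: list[float],
                     tolerance: float = 0.10) -> bool:
    """True if the current p95 latency is within `tolerance` of the baseline p95."""
    return percentile(current_ms, 95) <= percentile(baseline_ms, 95) * (1 + tolerance)


# Hypothetical latency samples in milliseconds.
baseline = [100, 105, 110, 95, 102, 98, 101, 99, 104, 103]
during_incident = [100, 310, 295, 105, 320, 98, 305, 99, 300, 315]
after_fix = [101, 104, 108, 96, 103, 99, 100, 98, 106, 102]

incident_ok = back_to_baseline(baseline, during_incident)
fix_ok = back_to_baseline(baseline, after_fix)
```

Comparing a high percentile rather than the average matters here: during the incident half the requests were still fast, so a mean would understate how bad the slow ones were.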

Best Practices for Implementing Cloud Network Observability

Instrument Everything That Matters

Observability starts with instrumentation -- embedding monitoring capabilities into your applications, infrastructure, and network components. Prioritize instrumenting customer-facing services, inter-service communication paths, and any component that has historically caused incidents.

Use standardized telemetry frameworks where possible. OpenTelemetry has emerged as the industry standard for generating and collecting metrics, logs, and traces in a vendor-neutral format.

Aggregate and Centralize Data

Observability data from multiple cloud providers, on-premises systems, and applications needs to flow into a centralized platform. Data fragmentation across multiple tools defeats the purpose of observability. Centralized aggregation enables cross-system correlation and eliminates the need to context-switch between consoles during incident investigation.

Set Up Intelligent Alerting

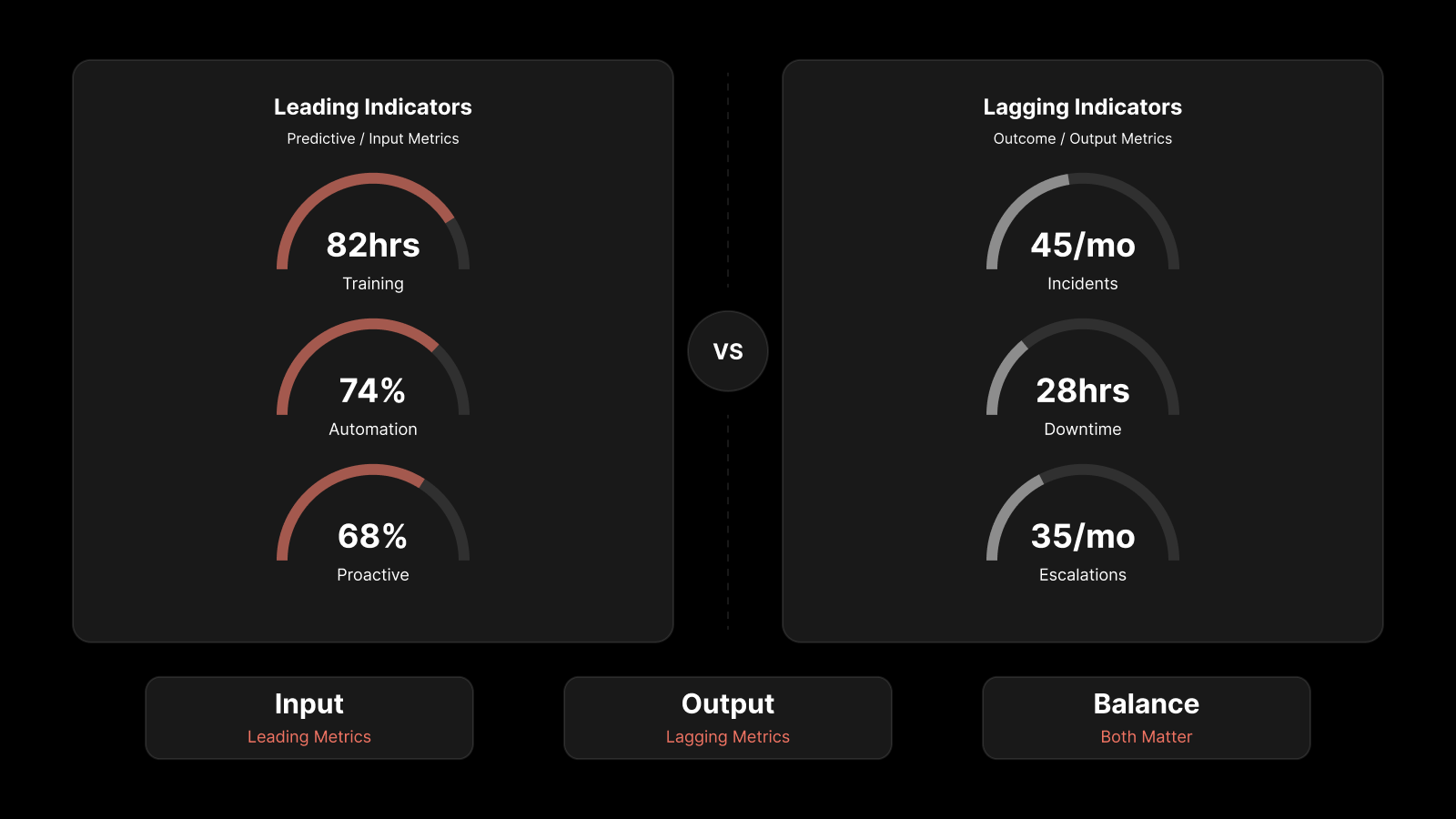

Move beyond static threshold alerts. Configure anomaly-based alerting that learns normal behavior patterns and flags deviations. Set up proactive monitoring that alerts on leading indicators of problems -- not just lagging indicators that confirm an outage is already in progress.
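As a rough illustration of anomaly-based alerting, the sketch below keeps a rolling window of recent values and flags any new value that sits more than a set number of standard deviations from the window's mean. This z-score approach is a deliberately simple stand-in for the learned baselines that observability platforms use; the class name and default parameters are assumptions.

```python
from collections import deque
from statistics import mean, stdev


class RollingAnomalyDetector:
    """Flags values that deviate strongly from a rolling baseline."""

    def __init__(self, window: int = 30, z_threshold: float = 3.0,
                 min_samples: int = 5) -> None:
        self.history = deque(maxlen=window)
        self.z_threshold = z_threshold
        self.min_samples = min_samples

    def observe(self, value: float) -> bool:
        """Return True if `value` is anomalous relative to recent history."""
        anomalous = False
        if len(self.history) >= self.min_samples:
            mu = mean(self.history)
            sigma = stdev(self.history)
            if sigma > 0:
                anomalous = abs(value - mu) / sigma > self.z_threshold
        # Learn only from normal values so an incident does not shift the baseline.
        if not anomalous:
            self.history.append(value)
        return anomalous


detector = RollingAnomalyDetector()
latencies_ms = [50, 52, 49, 51, 50, 53, 48, 51, 120, 50]
flags = [detector.observe(v) for v in latencies_ms]
```

Only the 120 ms sample is flagged. A static threshold would need to be hand-tuned per service to catch the same spike, whereas the rolling baseline adapts to whatever "normal" looks like for each metric.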

Automated response scripts and playbooks can handle common remediation actions (scaling resources, restarting services, rerouting traffic) without waiting for human intervention.

Break Down Team Silos

Observability data is most valuable when it's shared across development, operations, and security teams. Shared dashboards, unified alerting channels, and collaborative investigation tools ensure that all teams have access to the same data. This shared visibility reduces finger-pointing during incidents and accelerates resolution by bringing diverse expertise to the investigation.

People Also Ask

What's the difference between monitoring and observability?

Monitoring checks known conditions against predefined thresholds -- it answers "is this metric within range?" Observability enables you to understand why something is happening by exploring metrics, logs, and traces together. Monitoring tells you that a problem exists; observability helps you understand its cause, even for issues you haven't encountered before.

How does observability reduce MTTR?

Observability reduces MTTR by cutting the time engineers spend searching for relevant data. When metrics, logs, and traces are correlated and accessible from a single platform, engineers can identify root causes in minutes instead of hours. Automated anomaly detection also catches issues earlier in their lifecycle, often before users notice impact.

Do I need observability for a small cloud deployment?

Even small cloud deployments benefit from observability fundamentals. The three-pillar approach scales down well -- start with basic metrics and centralized logging, then add distributed tracing as your architecture grows more complex. Building observability practices early prevents the painful retrofit that comes with adding instrumentation to a large, mature system.

What's the relationship between observability and AIOps?

AIOps applies artificial intelligence and machine learning to IT operations data -- including observability data. Observability provides the raw telemetry (metrics, logs, traces) that AIOps platforms analyze to detect anomalies, predict failures, and automate remediation. Think of observability as the data foundation and AIOps as the intelligence layer built on top.

Cut Through Cloud Complexity With Motadata

Motadata's AI-native network monitoring and observability platform unifies metrics, logs, and traces into a single view of your cloud and hybrid infrastructure. With machine learning-powered anomaly detection, automated root cause correlation, and real-time dashboards built for practitioners, Motadata helps IT teams move from reactive firefighting to proactive operations. Whether you're managing a single cloud environment or a multi-cloud hybrid deployment, Motadata delivers the visibility you need to reduce MTTR, improve network performance, and stay ahead of issues. Start a free trial and experience observability-driven cloud infrastructure management.

FAQs

What is cloud network observability?

Cloud network observability is the practice of collecting and correlating metrics, logs, and traces from cloud infrastructure to understand the internal state of distributed systems. It goes beyond traditional monitoring by enabling teams to diagnose unforeseen issues, trace requests across microservices, and identify root causes in complex, dynamic environments.

Why do traditional monitoring tools fail in cloud networks?

Traditional tools were built for static, on-premises infrastructure with fixed device inventories. Cloud environments are dynamic -- resources scale automatically, containers are ephemeral, and workloads span multiple providers. Traditional tools can't discover, track, or correlate data across this constantly changing landscape.

What are the three pillars of observability?

The three pillars are metrics (numerical measurements of system performance), logs (timestamped records of events), and traces (end-to-end maps of request journeys across distributed services). Each pillar provides a different perspective, and correlating all three enables complete root cause analysis.

How do I get started with observability for my cloud network?

Start by instrumenting your most critical services with metrics collection and centralized logging. Add distributed tracing for services involved in complex request chains. Choose an observability platform that correlates data across all three pillars and supports your cloud providers. Build shared dashboards for cross-team visibility and configure anomaly-based alerting to catch issues early.

How does observability help with cloud security?

Observability enables security teams to detect unusual patterns -- anomalous login behavior, unexpected network traffic, unauthorized API access -- by correlating security-relevant data across metrics, logs, and traces. This cross-system visibility catches threats that siloed security tools miss, especially in multi-cloud environments with distributed attack surfaces.

Author

Arpit Sharma

Senior Content Marketer

Arpit Sharma is a Senior Content Marketer at Motadata with over 8 years of experience in content writing. Specializing in telecom, fintech, AIOps, and ServiceOps, Arpit crafts insightful and engaging content that resonates with industry professionals. Beyond his professional expertise, he is an avid reader, enjoys running, and loves exploring new places.