Mean Time Between Failures (MTBF): Formula, Examples, and How to Improve System Reliability

What's the one metric that always comes up when you talk about reliability and uptime? MTBF. It's used across SRE, engineering, IT operations, and infrastructure teams worldwide. Mean time between failures helps management understand how dependable their systems are and, critically, how often they're likely to fail.

A higher MTBF means the system runs longer between failures, which translates to fewer incidents and more predictable performance. That's why MTBF remains a cornerstone for assessing operational risk, reliability, and service continuity.

What Is MTBF (Mean Time Between Failures)?

Mean Time Between Failures (MTBF) is the average time a system functions normally before eventually breaking down. It answers one fundamental question: how long does the system operate between one failure and the next?

It's important to understand what MTBF isn't. It's not an availability metric. It's not a performance metric. And it doesn't tell you how long recovery takes -- that's the job of MTTR (Mean Time to Repair). MTBF strictly measures reliability by answering:

How often do failures occur?

Has the system become more or less stable over time?

Are your reliability improvements actually working?

Any repairable system that returns to service after failure can be evaluated using MTBF. That includes:

Compute infrastructure and servers

Network devices and communication systems

Middleware and databases

Enterprise applications

Why MTBF Matters in IT Operations

MTBF isn't just another metric. It reflects reliability in a way that ties directly to business performance, operational costs, and customer experience. Understanding MTBF trends helps teams gauge the actual health of their systems and whether they're moving toward stability.

Impact on system availability. Teams that achieve higher MTBF see fewer outages, better uptime, and more predictable infrastructure. Frequent incidents don't just create downtime -- they create friction in user experience. Tracking MTBF per system or component highlights where instability concentrates and helps prevent recurring failures.

Impact on service reliability. When IT operations teams understand MTBF trends, they can enhance system reliability by predicting failure behavior, identifying weak components, and detecting early warning signs of instability. Better MTBF leads to fewer incidents, fewer escalations, and more controlled environments.

Impact on user experience. From a user's perspective, frequent minor failures often feel worse than rare major ones. Reliability strongly influences trust and perception. A stable system with fewer disruptions -- even if some failures take time to repair -- typically delivers a better overall experience.

Impact on operational costs. A low MTBF drives up incident response workload, engineering fatigue, support and maintenance costs, and SLA risk exposure. Improving MTBF reduces firefighting and shifts teams toward proactive work, giving them room to improve related metrics such as MTTR, MTTF, and MTTA.

Different stakeholders view MTBF through different lenses:

SRE teams evaluate reliability patterns and risk

IT operations teams track infrastructure stability

Business leaders assess continuity and uptime impact

MTBF Formula: How to Calculate Mean Time Between Failures

Calculating MTBF requires just two inputs: total uptime and number of failures.

MTBF = Total Uptime / Number of Failures

Important: total uptime counts only the time the system was operational, so downtime is excluded. Each interval runs from recovery after one failure to the occurrence of the next.

MTBF Calculation Example

Suppose a production server is operational for 600 hours in a given month and experiences 6 failures.

MTBF = 600 / 6 = 100 hours

This means the server fails, on average, once every 100 hours of operation.
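
The same calculation can be sketched in a few lines of Python. The function name `mtbf_hours` and its parameters are illustrative and not tied to any particular tool.

```python
def mtbf_hours(total_uptime_hours: float, failure_count: int) -> float:
    """Return MTBF in hours: operational time divided by number of failures."""
    if failure_count <= 0:
        # With no recorded failures, MTBF is undefined for the period.
        raise ValueError("MTBF needs at least one failure in the period")
    return total_uptime_hours / failure_count


# The production server example: 600 operational hours, 6 failures.
print(mtbf_hours(600, 6))  # 100.0
```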

How teams should interpret this:

If MTBF increases month over month, system reliability is improving

If MTBF steadily drops, instability is rising and should be investigated

MTBF is most valuable when viewed as a trend over time, not as a one-time snapshot.
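
As a rough sketch of trend tracking, the snippet below computes MTBF per month and the change from the previous month. The monthly figures and the helper `mtbf_trend` are made up for illustration, and the sketch assumes every month has at least one failure.

```python
# Hypothetical monthly figures: (month, operational hours, failures).
monthly = [
    ("Jan", 700, 7),
    ("Feb", 650, 5),
    ("Mar", 710, 4),
]


def mtbf_trend(records):
    """Return (month, mtbf_hours, change_vs_previous_month) tuples."""
    trend = []
    previous = None
    for month, uptime_hours, failures in records:
        current = uptime_hours / failures  # assumes failures >= 1
        change = None if previous is None else current - previous
        trend.append((month, current, change))
        previous = current
    return trend


for month, mtbf, change in mtbf_trend(monthly):
    print(month, mtbf, change)
```

A positive change means the system ran longer between failures than in the previous month. A run of negative changes is the signal to investigate.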

Common Mistakes When Calculating MTBF

Even though the formula is simple, teams frequently get it wrong. Here are the mistakes that skew results:

Adding Downtime to Uptime

MTBF should only include the period while the system was operational. Adding downtime inflates the number and masks the real failure frequency.
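
To see the size of the error, consider a minimal sketch with assumed numbers: a 720-hour month in which 120 hours were spent down for repairs across 6 failures.

```python
calendar_hours = 720   # assumed 30-day month
downtime_hours = 120   # assumed total time spent down for repairs
failures = 6

# Correct: only operational time goes in the numerator.
correct_mtbf = (calendar_hours - downtime_hours) / failures

# Mistake: using calendar time counts downtime as if it were uptime.
inflated_mtbf = calendar_hours / failures

print(correct_mtbf)   # 100.0
print(inflated_mtbf)  # 120.0
```

With these numbers the mistake overstates MTBF by 20 percent, and the gap widens as repairs take longer.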

Using MTBF for Non-Repairable Systems

MTTF (Mean Time to Failure) is the correct metric for assets that can't be returned to service. MTBF applies exclusively to systems that get repaired and put back into operation.

Confusing MTBF With Availability

MTBF and MTTR both affect availability, but they measure different things. MTBF tracks how often failures happen. Availability combines MTBF with recovery speed. With effective AIOps solutions, businesses achieve faster mean time to repair, enhancing the end-user experience across both dimensions.
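
One commonly used way to express the relationship is the steady-state formula Availability = MTBF / (MTBF + MTTR). The sketch below applies it to two assumed systems with the same MTBF but different repair times. The function name `availability` is illustrative.

```python
def availability(mtbf_hours: float, mttr_hours: float) -> float:
    """Steady-state availability as a fraction between 0 and 1."""
    return mtbf_hours / (mtbf_hours + mttr_hours)


# Same failure frequency, very different recovery speed (assumed values).
fast_recovery = availability(100, 2)
slow_recovery = availability(100, 25)

print(round(fast_recovery, 4))  # 0.9804
print(round(slow_recovery, 4))  # 0.8
```

Both systems fail equally often, yet the second is available only about 80 percent of the time, which is why MTBF alone cannot stand in for availability.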

What Counts as a Good MTBF Value?

There's no universal benchmark. A "good" MTBF depends on:

Industry and regulatory expectations

System type and workload profile

Business criticality and tolerance for downtime

Some general patterns:

Network devices -- often measured in hundreds or thousands of hours

Enterprise applications -- typically days or weeks between failures

SaaS platforms -- aim for continuous improvement using redundancy

Instead of targeting a fixed number, teams should focus on long-term trend improvement, reduction in failure frequency, and identifying root causes that affect stability. MTBF becomes meaningful only when evaluated alongside system context.

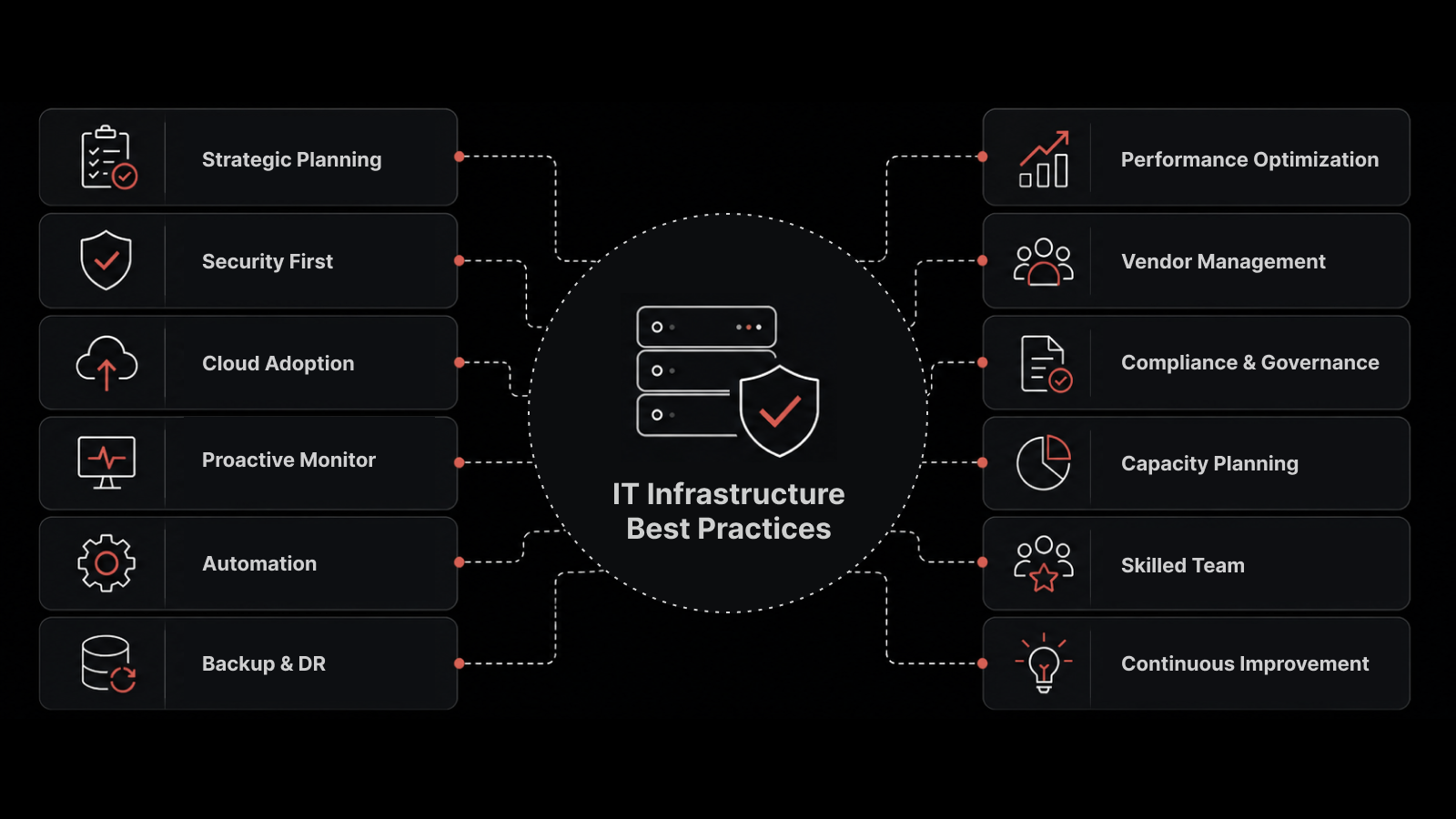

How to Improve MTBF in IT Systems

Chasing a higher MTBF number without improving the underlying practices doesn't produce sustainable results. Here are the approaches that actually move the needle.

Proactive Monitoring and Alerting

As with any IT operations monitoring practice, early detection is the key to preventing failures before they escalate. Effective proactive monitoring includes:

Anomaly detection that identifies deviations before they become incidents

Performance threshold tracking that triggers alerts at the right sensitivity level

Log and event correlation that connects dots across infrastructure layers

Better visibility enables teams to intervene before failures occur -- not after.
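
As one simple illustration of anomaly detection, the sketch below flags samples that deviate sharply from a trailing baseline using a z-score. The window size, threshold, and latency values are assumptions chosen for the example. Production monitoring tools typically use more robust methods.

```python
from statistics import mean, stdev


def find_anomalies(samples, window=5, threshold=3.0):
    """Return indexes of samples more than `threshold` standard
    deviations away from the mean of the preceding `window` samples."""
    anomalies = []
    for i in range(window, len(samples)):
        baseline = samples[i - window:i]
        mu = mean(baseline)
        sigma = stdev(baseline)
        if sigma == 0:
            continue  # flat baseline: z-score is undefined, skip
        if abs(samples[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


# Hypothetical response times in milliseconds with one spike.
latency_ms = [101, 99, 100, 102, 98, 100, 101, 180, 100, 99]
print(find_anomalies(latency_ms))  # [7]
```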

Root Cause Analysis of Recurring Failures

Recurring incidents almost always point to deeper issues: architectural weaknesses, misconfigurations, capacity limits, or fragile integrations. Fixing each incident as it occurs and speeding up response through automation both help, but teams should also focus on:

Analyzing historical failure data for patterns

Identifying systemic root causes across related incidents

Removing underlying causes permanently

Sustainable MTBF improvement comes from eliminating repeat failures, not just reacting faster.
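
A first pass at spotting repeat failures can be as simple as counting incidents by service and cause. The incident records and field names below are hypothetical. Real data would come from your incident management system.

```python
from collections import Counter

# Hypothetical incident history.
incidents = [
    {"id": 1, "service": "checkout", "cause": "disk full"},
    {"id": 2, "service": "search", "cause": "bad config"},
    {"id": 3, "service": "checkout", "cause": "disk full"},
    {"id": 4, "service": "checkout", "cause": "disk full"},
    {"id": 5, "service": "search", "cause": "bad config"},
    {"id": 6, "service": "auth", "cause": "expired certificate"},
]


def recurring_causes(records, min_count=2):
    """Return ((service, cause), count) pairs seen at least `min_count`
    times, most frequent first."""
    counts = Counter((r["service"], r["cause"]) for r in records)
    return [(key, n) for key, n in counts.most_common() if n >= min_count]


for (service, cause), n in recurring_causes(incidents):
    print(f"{service}: {cause} x{n}")
```

Pairs that appear repeatedly are candidates for a permanent fix rather than another one-off repair.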

Preventive Maintenance and Automation

Proactive maintenance stabilizes systems, especially in complex, infrastructure-heavy environments. This includes:

Regular patching on a predictable schedule

Configuration hygiene and drift detection

Dependency updates before they become vulnerabilities

Automated routine checks that catch issues without human intervention

Automation reduces human error -- one of the most common contributors to outages.

Learning From Historical Failure Data

Trend analysis helps IT teams understand patterns and anticipate failures before they happen:

Recognize recurring triggers and seasonal patterns

Forecast weak points based on historical degradation curves

Prioritize reliability investments where they'll have the greatest impact

Over time, teams that focus on improving MTBF shift from reactive firefighting to proactive resilience building.

Limitations of MTBF

MTBF's biggest shortcoming is that it can be misleading when viewed in isolation. It doesn't capture the full picture when:

Failures occur in rapid clusters rather than at even intervals

Downtime severity varies widely across the infrastructure

Systems experience degraded performance for extended periods without fully failing
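
The clustering problem is easy to demonstrate with made-up numbers. In the sketch below, two hypothetical systems report the same MTBF even though one of them fails five times in quick succession.

```python
from statistics import mean

# Hypothetical hours of operation between consecutive failures.
steady = [100, 100, 100, 100, 100, 100]
clustered = [5, 5, 5, 5, 5, 575]


def summarize(intervals):
    """Return MTBF alongside the shortest and longest gap between failures."""
    return {
        "mtbf": mean(intervals),
        "shortest": min(intervals),
        "longest": max(intervals),
    }


print(summarize(steady))
print(summarize(clustered))
```

Both systems average 100 hours between failures, but the second one has gaps as short as 5 hours. Looking at the spread of intervals, not just the mean, exposes the difference.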

To build a complete reliability picture, MTBF should be evaluated alongside:

MTTR (Mean Time to Repair) -- how quickly you recover

Recurring error rates -- whether the same issues keep coming back

Availability metrics -- what percentage of time the system is operational

Incident severity distribution -- how impactful each failure is

Reliability is multidimensional. MTBF is a vital part of it -- not the whole story.

How Motadata Helps You Track and Improve MTBF

Motadata's AI-native incident management platform gives IT teams the tools to measure MTBF accurately and improve it systematically:

Proactive monitoring with anomaly detection that identifies failure patterns before they escalate

Automated root cause analysis that correlates events across infrastructure layers

Historical trend analysis that reveals MTBF patterns over time and forecasts reliability risks

Integrated incident workflows that connect MTBF tracking with MTTR, availability, and SLA management

When you combine proactive monitoring with MTBF analysis, preventive maintenance, and structured incident management discipline, you achieve fewer recurring failures, a more stable production environment, improved service reliability, and a better user experience.

Enhancing MTBF isn't just a technical exercise. It's a consistent, cross-functional effort that increases operational confidence and gives the business more room to adapt.

Track MTBF effectively with Motadata's proactive monitoring and incident management platform.

FAQs

What does MTBF stand for?

MTBF stands for Mean Time Between Failures. It represents the average time a repairable system operates normally between one failure and the next.

How do you calculate MTBF?

MTBF is calculated by dividing the total operational uptime by the number of failures during that period: MTBF = Total Uptime / Number of Failures. Only operational time counts -- downtime should be excluded.

Is MTBF the same as availability?

No. MTBF measures how often failures occur, while availability also depends on how quickly systems recover, which is measured using MTTR. A system can have high MTBF but still have low availability if each recovery takes a long time.

What is a good MTBF value?

A good MTBF depends on the system type, industry, and business criticality. Instead of targeting a fixed number, teams should focus on improving MTBF trends over time and identifying root causes that drive instability.

How can IT teams improve MTBF?

IT teams can improve MTBF by using proactive monitoring, performing root cause analysis on recurring incidents, reducing change-related failures through controlled change management, automating maintenance tasks, and analyzing historical failure data to forecast and prevent future issues.

What is the difference between MTBF and MTTF?

MTBF applies to repairable systems -- it measures the average time between failures for systems that get fixed and returned to service. MTTF (Mean Time to Failure) applies to non-repairable systems or components -- it measures how long a system operates before its first (and final) failure.

How does MTBF relate to SLA compliance?

MTBF directly impacts SLA compliance because service level agreements typically include uptime commitments. A declining MTBF signals increased failure frequency, which puts SLA targets at risk. Teams that monitor MTBF trends can proactively address reliability issues before they trigger SLA violations.

Author

Arpit Sharma

Senior Content Marketer

Arpit Sharma is a Senior Content Marketer at Motadata with over 8 years of experience in content writing. Specializing in telecom, fintech, AIOps, and ServiceOps, Arpit crafts insightful and engaging content that resonates with industry professionals. Beyond his professional expertise, he is an avid reader, enjoys running, and loves exploring new places.